Knowledge is central to intelligence. We need it to use or understand language; to make decisions; to recognize objects; to interpret situations; to plan strategies. We store in our memories millions of pieces of knowledge that we use daily to make sense of the world and our interactions with it.

Some of the knowledge we possess is factual: we know what things are and what they do. This type of knowledge is known as declarative knowledge. We also know how to do things; this is known as procedural knowledge. For example, if we consider what we know about the English language, we may have the declarative knowledge that the word “tree” is a noun and that “tall” is an adjective. These are among the thousands of facts we know about the English language.

However, we also have procedural knowledge about English. For example, we may know that in order to provide more information about something we place an adjective before the noun.

Similarly, imagine you are giving directions to your home. You may have declarative knowledge about the location of your house and its transport links (for example, “my house is in Golcar”, “the number 301 bus runs through Golcar”, “Golcar is off the Manchester Road”). In addition you may have procedural knowledge about how to get to your house (“Get on the 301 bus”).

Another distinction that can be drawn is between the specific knowledge we have on a particular subject (domain-specific knowledge) and the general or “common-sense” knowledge that applies throughout our experience (domain-independent knowledge). The fact “the number 301 bus goes to Golcar” is an example of the former: it is knowledge that is relevant only in a restricted domain – in this case Huddersfield’s transport system. New knowledge would be required to deal with transport in any other city. However, the knowledge that a bus is a motorized means of transport is a piece of general knowledge which is applicable to buses throughout our experience.

General or common-sense knowledge also enables us to interpret situations accurately. For example, imagine someone asks you “Can you tell me the way to the station?”. Your common-sense knowledge tells you that the person expects a set of directions; only a deliberately obtuse person would answer literally “yes”! Similarly there are thousands if not millions of “facts” that are obvious to us from our experience of the world, many acquired in early childhood. They are so obvious to us that we wouldn’t normally dream of expressing them explicitly. Facts about age: a person’s age increments by one each year, children are always younger than their parents, people don’t live much longer than 100 years; facts about the way that substances such as water behave; facts about the physical properties of everyday objects and indeed ourselves – this is the general or “common” knowledge that humans share through shared experience and that we rely on every day.

Just as we need knowledge to function effectively, it is also vital in artificial intelligence. As we saw earlier, one of the problems with ELIZA was lack of knowledge: the program had no knowledge of the meanings or contexts of the words it was using and so failed to convince for long. So the first thing we need to provide for our intelligent machine is knowledge. As we shall see, this will include procedural and declarative knowledge and domain-specific and general knowledge. The specific knowledge required will depend upon the application. For language understanding we need to provide knowledge of syntax rules, words and their meanings, and context; for expert decision making, we need knowledge of the domain of interest as well as decision-making strategies. For visual recognition, knowledge of possible objects and how they occur in the world is needed. Even simple game playing requires knowledge of possible moves and winning strategies.

1.3 Representing knowledge

We have seen the types of knowledge that we use in everyday life and that we would like to provide to our intelligent machine. We have also seen something of the enormity of the task of providing that knowledge. However, the knowledge that we have been considering is largely experiential or internal to the human holder. In order to make use of it in AI we need to get it from the source (usually a human, but possibly another information source) and represent it in a form usable by the machine. Human knowledge is usually expressed through language, which, of course, cannot be accurately understood by the machine. The representation we choose must therefore both be appropriate for the computer to use and allow easy and accurate encoding from the source.

We need to be able to represent facts about the world. However, this is not all. Facts do not exist in isolation; they are related to each other in a number of ways. First, a fact may be a specific instance of another, more general fact. For example, “Spotty Dog barks” is a specific instance of the fact “all dogs bark” (not strictly true but a common belief). In a case like this, we may wish to allow property inheritance, in which properties or attributes of the main class are inherited by instances of that class. So we might represent the knowledge that dogs bark and that Spotty Dog is a dog, allowing us then to deduce by inheritance the fact that Spotty Dog barks. Secondly, facts may be related by virtue of the object or concept to which they refer. For example, we may know the time, place, subject and speaker for a lecture and these pieces of information make sense only in the context of the occasion by which they are related. And of course we need to represent procedural knowledge as well as declarative knowledge.
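
As a rough illustration of property inheritance, the Spotty Dog example can be sketched in a few lines of Python. The dictionary layout and the function name `has_property` are assumptions made for this sketch, not a representation scheme prescribed by the text; practical schemes handle class hierarchies and exceptions that are ignored here.

```python
# Hypothetical toy knowledge base (names and layout are illustrative only).
# Properties are stored once, at the class level.
class_properties = {"dog": {"barks"}}

# Each individual is recorded as an instance of a class.
instance_of = {"Spotty Dog": "dog"}


def has_property(individual, prop):
    """Return True if the individual inherits prop from its class."""
    cls = instance_of.get(individual)
    return cls is not None and prop in class_properties.get(cls, set())


# "Spotty Dog barks" is never stored explicitly; it is deduced by inheritance.
print(has_property("Spotty Dog", "barks"))  # True
```

The fact that Spotty Dog barks does not appear anywhere in the data; it follows from the two stored facts, which is the point of allowing inheritance.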

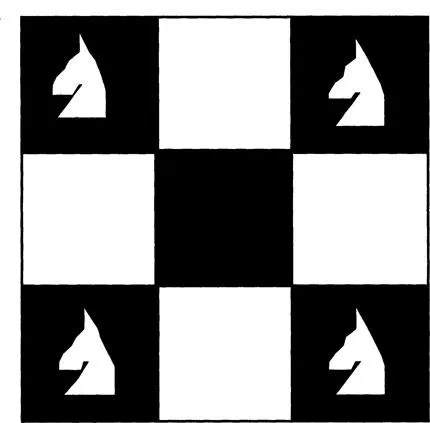

It should be noted that the representation chosen can be an important factor in determining the ease with which a problem can be solved. For example, imagine you have a 3 × 3 chess board with a knight in each corner (as in Fig. 1.1). How many moves (that is, chess knight moves) will it take to move each knight round to the next corner?

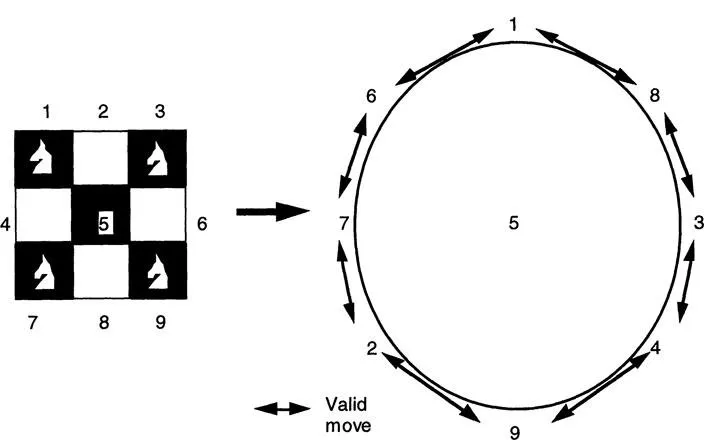

Looking at the diagrammatic representation in Figure 1.1, the solution is not obvious, but if we label each square and represent valid moves as adjacent points on a circle (see Fig. 1.2), it becomes much clearer: each of the four knights takes two moves to reach its new position, so the minimum number of moves is eight.
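
The same change of representation can be tried out in code. The sketch below treats the board as a graph whose nodes are squares and whose edges are knight moves, then measures how far each corner is from the next. The row-by-row labelling 1 to 9 and the helper names `knight_moves` and `distance` are assumptions of this sketch and may not match the labels used in the figure. The code only computes each knight's own shortest route; it does not check whether the knights block one another.

```python
from collections import deque

# Squares are labelled 1..9 row by row (an assumed labelling).
def knight_moves(square, size=3):
    """List the squares a knight can reach from the given square."""
    row, col = divmod(square - 1, size)
    jumps = [(1, 2), (2, 1), (-1, 2), (-2, 1),
             (1, -2), (2, -1), (-1, -2), (-2, -1)]
    result = []
    for dr, dc in jumps:
        r, c = row + dr, col + dc
        if 0 <= r < size and 0 <= c < size:
            result.append(r * size + c + 1)
    return sorted(result)


# The graph representation: square -> squares reachable in one knight move.
graph = {s: knight_moves(s) for s in range(1, 10)}


def distance(start, goal):
    """Breadth-first search for the fewest knight moves from start to goal."""
    seen = {start: 0}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        if current == goal:
            return seen[current]
        for nxt in graph[current]:
            if nxt not in seen:
                seen[nxt] = seen[current] + 1
                queue.append(nxt)
    return None  # goal cannot be reached from start


corners = [1, 3, 9, 7]  # the four corners in clockwise order
per_knight = [distance(c, corners[(i + 1) % 4]) for i, c in enumerate(corners)]
print(per_knight, sum(per_knight))  # [2, 2, 2, 2] 8
```

Printing `graph` shows that every square except the centre has exactly two neighbours, which is why the valid moves can be drawn as points around a circle.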

In addition, the granularity of the representation can affect its usefulness. In other words, we have to determine how detailed the knowledge we represent needs to be. This will depend largely on the application and the use to which the knowledge will be put. For example, if we are building a knowledge base about family relationships we may include a representation of the definition of the relation “cousin” (given here in English but easily translatable into logic, for example):

your cousin is a child of a sibling of your parent.

However, this may not be enough information; we may also wish to know the gender of the cousin. If this is the case a more detailed representation is required. For a female cousin:

your cousin is a daughter of a sibling of your parent

or, for a male cousin,

your cousin is a son of a sibling of your parent.

Similarly, if you wanted to know to which side of the family your cousin belongs you would need different information: from your father’s side

your cousin is a child of a sibling of your father

or your mother’s

your cousin is a child of a sibling of your mother.

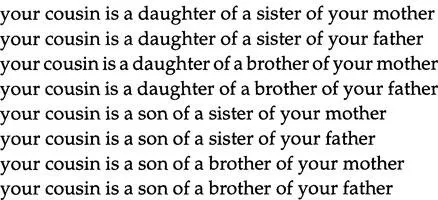

A full description of all the possible variations is given in Figure 1.3. Such detail may not always be required and therefore may in some circumstances be unnecessarily complex.
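
To make the question of granularity concrete, the cousin definitions above can be sketched in Python. All of the family members, the `parents` and `gender` tables, and the function names are hypothetical and introduced only for illustration. The coarse relation needs only parent links; the finer variants need extra knowledge (which parent is the mother or father, and each cousin's gender) to be represented as well.

```python
# Hypothetical family facts: person -> {"mother": ..., "father": ...} (may be partial).
parents = {
    "you": {"mother": "mum", "father": "dad"},
    "mum": {"mother": "gran_m"},
    "aunt": {"mother": "gran_m"},
    "dad": {"father": "grandad_d"},
    "uncle": {"father": "grandad_d"},
    "alice": {"mother": "aunt"},
    "bob": {"father": "uncle"},
}
# Extra detail needed only for the gender-specific definitions.
gender = {"alice": "female", "bob": "male"}


def parents_of(person):
    return set(parents.get(person, {}).values())


def siblings(person):
    """People other than person who share at least one known parent."""
    own = parents_of(person)
    return {p for p in parents if p != person and parents_of(p) & own}


def children(person):
    return {c for c in parents if person in parents_of(c)}


def cousins(person, side=None, cousin_gender=None):
    """A cousin is a child of a sibling of a parent.

    side ("mother" or "father") and cousin_gender ("female" or "male")
    are optional refinements giving the more detailed definitions.
    """
    result = set()
    for role, parent in parents.get(person, {}).items():
        if side is not None and role != side:
            continue
        for sib in siblings(parent):
            for child in children(sib):
                if cousin_gender is None or gender.get(child) == cousin_gender:
                    result.add(child)
    return result


print(sorted(cousins("you")))                          # ['alice', 'bob']
print(sorted(cousins("you", side="father")))           # ['bob']
print(sorted(cousins("you", cousin_gender="female")))  # ['alice']
```

If the application only ever asks the first question, the `gender` table and the mother/father labels are unnecessary detail, which is the trade-off the text describes.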

There are a number of knowledge representation methods that can be used. Later in this chapter we will examine some of them briefly, and ...