
CHAPTER 1

Introduction

Jerry J. Battista

London Health Sciences Centre and University of Western Ontario

CONTENTS

1.1 Overview

1.1.1 A Brief History of Radiation Treatment Planning

1.1.2 Expanding Role of Dose Computation Algorithms

1.1.3 Where Physics Intersects Biology

1.1.3.1 Cell Survival Curves

1.1.3.2 Biologically Effective Dose

1.1.3.3 Tumour Control and Normal Tissue Complication Probability

1.2 Required Accuracy in Delivered Dose

1.2.1 Using a Systems Model

1.2.2 Radiobiological Considerations

1.2.3 Physical Considerations

1.2.3.1 Dose Auditing

1.2.4 Clinical Considerations

1.2.4.1 Contouring Uncertainty

1.2.4.2 Dose-Volume Histograms

1.2.4.3 Clinical Detection of Dose Inaccuracy

1.2.4.4 Loco-Regional Control and Patient Survival

1.3 Evaluation of Algorithm Performance

1.3.1 Gamma Testing

1.3.2 Computational Speed

1.4 Summary and Scope of Book

1.1 OVERVIEW

All forms of cancer therapy share a common goal: to eradicate tumours while minimizing collateral injury to surrounding normal organs. During the early days of radiotherapy, this was a formidable challenge, often described as aiming invisible radiation at an invisible target. Fortunately, radiation oncology was one of the first medical specialities to embrace computerization, and it remains poised to take full advantage of emerging technology. One of the largest impacts came from digital three-dimensional (3D) imaging. The rapid adoption of x-ray computed tomography (CT) not only improved the targeting of disease but also provided quantitative maps of in vivo tissue densities for accurate computation of dose distributions (Van Dyk and Battista 2014). Ultrasound, magnetic resonance imaging (MRI) and radioisotope imaging also contribute to detecting internal disease. Further advances will continue to shape the clinical practice of radiation oncology (Jaffray et al. 2018). For example, CT and MRI systems have been integrated with treatment machines to deliver dose with unprecedented precision (Lagendijk et al. 2014).

The Merriam-Webster dictionary defines an algorithm as “a procedure for solving a mathematical problem in a finite number of steps that frequently involves repetition of an operation; broadly, a step-by-step procedure for solving a problem or accomplishing some end, especially by means of a computer”. Dose computation algorithms are critical elements of a radiation treatment planning system. While ergonomic software tools for displaying, contouring or aligning images are important, a clinically viable treatment plan must rely on a trustworthy dose algorithm. Inaccurate dosimetry can mislead clinical decision-making and taint the interpretation of clinical trials, potentially affecting the lives of many cancer patients. While the raison d’être of dose algorithms has been the optimization of dose distributions during treatment planning, their range of applications is now expanding rapidly. Algorithms are used to re-compute dose distributions du jour, adapting to changes in anatomy seen by on-board imaging. When coupled with deformable image registration algorithms, they allow the dose accumulated in mobile tissue voxels to be tracked and the dose distribution to be re-optimized throughout a course of treatment. In the long term, highly controlled dose delivery will facilitate the development and testing of new human radiobiology models.

1.1.1 A Brief History of Radiation Treatment Planning

Computerization of the radiotherapy planning process has a rich history (Rinks 2012; Cunningham 1989). In the 1950s, external beam treatment planning involved manually overlaying semi-transparent isodose charts onto the patient’s external contour, which was measured mechanically in a single plane and re-plotted onto paper using a pantograph device. The treatment planner would then join the points of equal summed dose to map the composite isodose pattern from several beams. One of the earliest forms of automated treatment planning was developed by Tsien (1955). Isodose data were encoded on a stack of punch cards for data entry. The external contour of the patient was drawn in polar coordinates and the beam directions were chosen. The radiation source was most often cobalt-60 (Smith et al. 1964). The punch cards were sorted automatically by an adding machine that summed the dose at a grid of points within the patient’s external contour. The isodose distribution was then plotted, and a simple treatment plan could be produced in 15 minutes rather than a few hours!
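The core of the punch-card approach is to look up each beam’s relative dose at every grid point inside the contour and add the contributions together. The Python sketch below mimics that summation. It is not a reconstruction of Tsien’s 1955 method. The circular “patient”, the exponential fall-off with depth, the sharp field edges and all parameter values (`mu`, `half_width`, grid `spacing`, beam angles) are assumptions that stand in for measured cobalt-60 isodose charts.

```python
import math

def toy_beam_dose(x, y, angle_deg, radius, half_width, mu=0.05):
    """Relative dose at (x, y), in cm, from one beam aimed at the centre of a
    circular contour of the given radius. ASSUMED model in place of a measured
    isodose chart: exponential fall-off with depth (mu in 1/cm) inside a
    sharp-edged field of half-width half_width, zero outside it."""
    a = math.radians(angle_deg)
    ux, uy = -math.cos(a), -math.sin(a)   # beam direction, source -> centre
    depth = x * ux + y * uy + radius      # distance past the entry point
    lateral = abs(x * uy - y * ux)        # distance from the central axis
    if depth < 0 or lateral > half_width:
        return 0.0
    return math.exp(-mu * depth)

def composite_dose(beam_angles, radius=10.0, spacing=1.0, half_width=3.0):
    """Sum every beam's contribution at each grid point inside the contour,
    which is the step the punch-card machine automated."""
    n = int(radius // spacing)
    dose = {}
    for i in range(-n, n + 1):
        for j in range(-n, n + 1):
            x, y = i * spacing, j * spacing
            if x * x + y * y > radius * radius:
                continue                  # outside the external contour
            dose[(x, y)] = sum(
                toy_beam_dose(x, y, a, radius, half_width) for a in beam_angles
            )
    return dose

# Four-field "box" arrangement (assumed beam angles, in degrees)
plan = composite_dose([0, 90, 180, 270])
for y in (0.0, 2.0, 6.0):
    print(f"dose at (0, {y:.0f}) cm = {plan[(0.0, y)]:.3f}")
```

If you joined the grid points in `plan` that share the same summed dose, you would get the composite isodose lines that planners once drew by hand.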

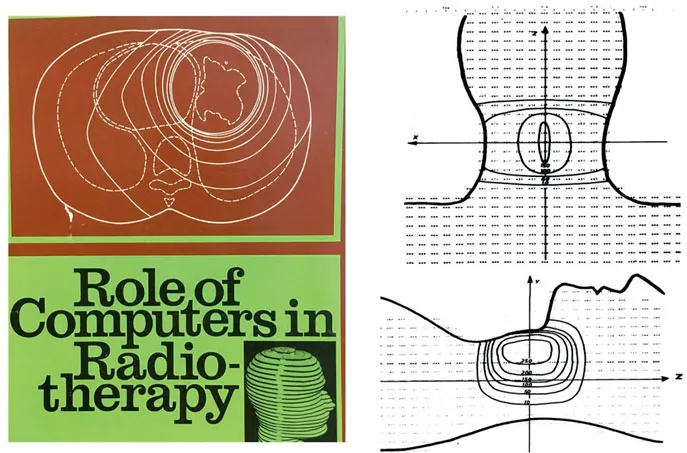

A panel of consultants met at the International Atomic Energy Agency (IAEA) in Vienna in 1965 and 1967 to exchange knowledge on dose calculation methods and general applications of computers in radiotherapy (IAEA 1966, 1968). The front cover of their final report is shown in Figure 1.1. The report described potential roles of computers in radiotherapy departments, including clinical treatment planning for teletherapy and brachytherapy, and compiled a list of thirty computer programs. One article described a Monte Carlo simulation of multiple photon scattering within a water absorber, a precursor to the later modelling of scatter kernels. The pivotal idea of splitting radiation dose into its primary and scattered components, which dates back to the kilovoltage era, was re-asserted as an important strategy for advancing dose computation. The compendium also listed programs for radiobiological optimization of treatment plans. The panel chairman concluded his lead article with the following remarkable foresight and challenge:

“It is likely that, at present, the weakest link…is now the information about the patient: localization, extent of tumour and body inhomogeneities, as well as the means of accurate and reproducible beam direction. It is very likely that the computer can also aid this.”

Dr. J.R. Cunningham

A virtual humanoid phantom was also described in the proceedings. It could be scaled to match the external size of a particular patient (Busch 1968). This phantom, seen on the cover of the report (Figure 1.1), pre-dated 3D displays and printing. It foreshadowed the patient-specific transverse anatomical slices that CT scanning later provided, during the period of accelerated growth from 1970 to 1980. A sample 3D dose distribution is shown in Figure 1.1. The author noted that “the program was too slow and would be re-written in FORTRAN to run on an IBM 7040 computer. The new program will be able to calculate any therapy field by computing elementary fields of 1 × 1 cm².” This beam decomposition technique is essentially the pencil beam model of later decades.
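Computing “elementary fields of 1 × 1 cm²” depends on superposition. Once you know the contribution of each small field, you can obtain any larger or shaped field as the sum of its pieces. The Python sketch below illustrates only that idea and is not Busch’s program. It assumes a hypothetical Gaussian lateral blur (`SIGMA_CM`) at a single depth. It does not model depth dependence, scatter or output factors. The function names are illustrative.

```python
import math

SIGMA_CM = 0.4  # ASSUMED lateral blur (cm) of each elementary field

def edge_profile(x, a, b, sigma=SIGMA_CM):
    """1D relative dose at x from unit fluence on [a, b], blurred by a
    Gaussian of width sigma (an assumed stand-in for lateral spread)."""
    s = math.sqrt(2.0) * sigma
    return 0.5 * (math.erf((b - x) / s) - math.erf((a - x) / s))

def rect_dose(x, y, x0, x1, y0, y1):
    """Relative dose at (x, y) from a uniform rectangular field
    [x0, x1] x [y0, y1]; the 2D Gaussian blur is separable."""
    return edge_profile(x, x0, x1) * edge_profile(y, y0, y1)

def dose_from_elementary_fields(x, y, cells, weights=None):
    """Superpose 1 x 1 cm^2 elementary fields. Cell (i, j) covers
    [i, i+1] x [j, j+1] cm; weights optionally maps a cell to a relative
    intensity (default 1.0), which also allows shaped or modulated fields."""
    weights = weights or {}
    return sum(
        weights.get((i, j), 1.0) * rect_dose(x, y, i, i + 1, j, j + 1)
        for i, j in cells
    )

# A 4 x 4 cm^2 open field centred on the origin, split into 16 elementary fields
cells = [(i, j) for i in range(-2, 2) for j in range(-2, 2)]
for x in (0.0, 1.5, 2.0, 3.0):
    whole = rect_dose(x, 0.0, -2, 2, -2, 2)
    summed = dose_from_elementary_fields(x, 0.0, cells)
    print(f"x = {x:.1f} cm: whole field {whole:.4f}, sum of elementary fields {summed:.4f}")
```

Each elementary field contributes an independent term. Blocking a cell or changing its weight therefore alters only that term, which suggests why the decomposition suits irregular and intensity-modulated fields.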

Atlases of radiation dose distributions were also published by the IAEA (MacDonald 1965), including atlases for rotational therapy, a mode of delivery revived in contemporary practice (e.g. VMAT and tomotherapy). Early developments aimed to improve international access to treatment planning resources by sharing cobalt-60 data sets. This included rapid retrieval of measured data for each beam size, with secondary corrections for the patient’s external shape and for major internal tissue inhomogeneities such as the lungs. In the mid-1960s, powerful computer workstations were not yet available within the constraints of hospital space and budgets. The Ontario Cancer Treatment and Research Foundation in Canada provided an interim solution. Remote terminals were installed across a provincial network of several cancer centres, all served by a time-shared central IBM computer. Treatment planning software was developed at Princess Margaret Hospital in Toronto and shared across the cancer centres. Isodose distributions were printed with alphanumeric characters on noisy teletype terminals. A dedicated program console (PC) eventually emerged for stand-alone use, with interactive input devices and a monochrome graphics display. By the early 1970s, this type of workstation had evolved into commercial products for two-dimensional (2D) planning, including the RAD-8, PC-12 (Artronix) and TP-11 (AECL) systems. In time, video displays and printers with grey-tone and colour capabilities were introduced.

Figure 1.1: IAEA report that forecast the role of computers in the field of radiation oncology (IAEA 1968). A virtual humanoid phantom, shown on the cover, was used for early 3D dose calculations (Busch 1968).

The next major thrust towards 3D treatment planning came with the introduction of CT head scanning in 1972. Its applications to cancer diagnosis and treatment planning soon became obvious (Battista et al. 1980). Numerous vendors then designed whole-body systems, including General Electric, Picker, Siemens, Philips, Toshiba and Artronix. Some were installed in cancer treatment centres with specialized “beam’s-eye-view” software, gradually replacing traditional radiographic treatment simulators. The slice-by-slice images were ideal for contouring targets and organs-at-risk, and they meshed well with existing 2D treatment planning software architecture. However, the assumption of a homogeneous patient composed solely of water-like tissue became untenable. The limitations of 2D approaches led to investments by companies and national agencies. Examples include the National Cancer Institute in the USA, the CART group initiative in Scandinavia (i.e. the Helax system) and the OMEGA group in North America. Major upgrades to dose computation capabilities followed, accounting for heterogeneous tissue described in 3D data structures (Sontag and Cunningham 1978; Wong et al. 1984). Bortfeld et al. (1990) noted the similarity between the concepts used in medical image reconstruction and in dose optimization through beam intensity modulation. A new generation of convolution algorithms soon emerged (Mackie et al. 1984). For higher-energy x-ray beams, modelling the spread of energy away from photon interaction sites required consideration of the range of the more energetic secondary electrons (Mackie et al. 1988; Ahnesjö et al. 1987; Yu et al. 1995). Cost-effective workstations with enhanced computing power prompted a resurgence of interest in Monte Carlo simulation (Andreo 1991). This technique was implemented for modelling the radiation emerging from linear accelerators (Rogers et al. 1995, 2009), providing the data necessary to bootstrap the new breed of algorithms.
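You can see the convolution idea in one dimension. Energy released at each depth (often called TERMA) is spread downstream by a kernel that represents secondary-electron transport, and the dose is the discrete convolution of the two. The Python sketch below is a deliberately simplified 1D, forward-only caricature, not the 3D point-kernel method of Mackie et al. The exponential shapes of TERMA and kernel, and the values of `mu` and `range_cm`, are assumptions chosen only to make the dose build-up visible.

```python
import math

def terma_profile(n, dz, mu):
    """ASSUMED relative TERMA along depth for a monoenergetic beam:
    mu * exp(-mu * z), with mu in 1/cm and z = k * dz."""
    return [mu * math.exp(-mu * k * dz) for k in range(n)]

def forward_kernel(n, dz, range_cm):
    """ASSUMED 1D deposition kernel: energy released at a site is deposited
    downstream with an exponential spread of length range_cm. The discrete
    kernel is normalised to sum to 1 (all released energy is deposited)."""
    h = [math.exp(-k * dz / range_cm) for k in range(n)]
    total = sum(h)
    return [v / total for v in h]

def convolve_dose(terma, kernel):
    """Discrete superposition: D[k] = sum over m <= k of T[m] * h[k - m]."""
    n = len(terma)
    return [sum(terma[m] * kernel[k - m] for m in range(k + 1)) for k in range(n)]

dz = 0.1   # depth step, cm
n = 300    # 30 cm of depth
T = terma_profile(n, dz, mu=0.05)
D = convolve_dose(T, forward_kernel(n, dz, range_cm=0.5))
k_max = max(range(n), key=lambda k: D[k])
print(f"surface dose / surface TERMA = {D[0] / T[0]:.3f}")
print(f"depth of maximum dose = {k_max * dz:.1f} cm")
```

The dose at the surface falls well below the local TERMA and peaks at a finite depth. This is the build-up effect that appears once the electron range is taken into account. A realistic algorithm would use 3D kernels and handle tissue heterogeneity.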
Figure 1.2 shows the annual publication rate for the current generation of dose algorithms. Between 1980 and 2017...