
1 Energy-Efficient Photonic Interconnects for Computing Platforms

Odile Liboiron-Ladouceur, Nicola Andriolli, Isabella Cerutti, Piero Castoldi, and Pier Giorgio Raponi

CONTENTS

1.1 Introduction

1.2 Photonic Technology Solutions in Computing Platforms

1.2.1 Photonic Point-to-Point Links

1.2.2 Single-Plane Photonic Interconnection Networks

1.2.2.1 Space Switching

1.2.2.2 Wavelength Switching

1.2.2.3 Time Switching

1.2.3 Multiplane Photonic Interconnection Networks

1.3 Energy-Efficient Photonic Interconnection Networks

1.3.1 Energy-Efficient Devices

1.3.2 Energy-Efficient Design of Systems and Architectures

1.3.3 Energy-Efficient Usage

1.3.4 Energy-Efficient Scalability

1.4 RODIN: Best Practice Example

1.4.1 Space-Wavelength (SW) and Space-Time (ST) Switched Architectures

1.4.2 Energy-Efficiency Analysis Architecture

1.5 Conclusion

References

1.1 INTRODUCTION

Impressive data processing and storage capabilities have been reached through continuous technological improvement in integrated circuits. Whereas integration density has closely followed Moore’s law (a doubling of the number of devices per unit of area on a chip every 18 to 24 months), the performance of single computational systems has essentially hit a power wall [28]. To keep improving computational performance, explicit parallelism is exploited at the processor level as well as at the system level to realize high-performance computing platforms.

Computing platforms of different types offer tremendous computing and storage capabilities, suitable for scientific and business applications. A notable example is given by supercomputers, the fastest computing platforms, used for running highly calculation-intensive applications in the fields of physics, astronomy, mathematics, and life science [25]. Another relevant example is given by data centers and server farms, whose emergence has been driven by the development of the Internet. Data centers not only enable fast retrieval of stored information for users connected to the Internet, but can also support advanced applications (such as cloud computing) that offer computational and storage services. The growing demand for information and computational capacity to support such applications is driving performance growth, which is enabled by parallelism.

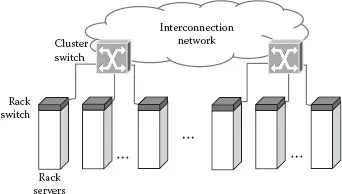

Parallelism allows application tasks to be executed simultaneously across multiple distinct processors, reducing execution time and increasing the utilization of the computing platform. To benefit from these advantages, the computing systems must be interconnected through a high-capacity interconnection network. In currently deployed data centers and server farms, parallelism is achieved by tightly clustering thousands of homogeneous servers, either high-end servers or, more often, commodity computers, depending on the type of computing platform [3]. Typically, each rack hosts a few tens of servers connected through a rack switch, which is in turn connected to a cluster switch, as shown in Figure 1.1, so that each server can communicate with any other server. The communication infrastructure consists of electrical switches typically based on Ethernet (for lower cost and flexibility) or the InfiniBand protocol (for higher performance). Similarly, in supercomputers, an interconnection network with high throughput and low latency is required for connecting thousands of compute nodes [6]. Recently, the distinction between the two most prominent computing platforms, data centers and supercomputers, has become blurred. High-performance scientific computation has been demonstrated in data centers by running tasks in parallel through cloud computing, but the performance of the communication infrastructure was found to lag behind expectations [45]. The requirements of high throughput and low latency are especially stringent for such high-performance computations.

FIGURE 1.1 Generic interconnection network of a high-performance computing platform.

In the last decade, the main bottleneck of computing infrastructure has shifted from the compute nodes to the performance of the communication infrastructure [15]. As computing platforms scale (i.e., increase in the number of servers and in computational capacity), the requirements of high throughput and low latency are becoming more difficult to achieve and guarantee. Indeed, the bandwidth of an electronic switch port is limited to the transmission line rate, whereas the number of ports can only scale to a few hundred per switch. To overcome these limitations, two or more levels of interconnection (i.e., one intrarack level and one or more interrack levels) are required to enable full connectivity among all the servers. However, the bisection bandwidth of the interrack level is typically limited to a fraction of the total communication bandwidth. Therefore, to meet the throughput and latency requirements, innovative interconnection solutions that offer a higher degree of scalability in ports and line rate are necessary.
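
The trade-off between port count and interrack bandwidth can be made concrete with a small sizing sketch. It is only an illustration under assumptions that are not taken from the chapter: all switches have the same port count, all links run at the same line rate, and a single cluster switch terminates every rack uplink. The function name `two_level_capacity` and its parameters are hypothetical.

```python
def two_level_capacity(ports_per_switch: int, uplinks_per_rack: int) -> dict:
    """Size a two-level tree: rack switches under one cluster switch.

    Illustrative assumptions (not from the chapter): identical switches,
    identical line rate on every link, one cluster switch for all racks.
    """
    if not 0 < uplinks_per_rack < ports_per_switch:
        raise ValueError("uplinks_per_rack must be in 1..ports_per_switch-1")

    # Ports of a rack switch not used as uplinks are left for servers.
    servers_per_rack = ports_per_switch - uplinks_per_rack
    # The cluster switch can terminate at most ports_per_switch uplinks.
    racks = ports_per_switch // uplinks_per_rack
    # Share of the aggregate server bandwidth that can leave a rack.
    interrack_fraction = uplinks_per_rack / servers_per_rack

    return {
        "servers_per_rack": servers_per_rack,
        "racks": racks,
        "servers": racks * servers_per_rack,
        "interrack_fraction": interrack_fraction,
    }


if __name__ == "__main__":
    for uplinks in (4, 8, 16, 24):
        print(uplinks, two_level_capacity(48, uplinks))
```

With 48-port switches, raising the number of uplinks per rack increases the fraction of traffic that can cross the interrack level, but it reduces both the servers per rack and the number of racks the cluster switch can serve. This is the scaling tension described above.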

The increase in the size of computing platforms also causes a dramatic increase in power consumption, which may seriously impair further scaling [28]. Currently, the power consumption of large computing platforms is increasing at a yearly rate of about 15% to 20% for data centers [5,7] and up to 50% for supercomputers [26]. According to recent studies [17], the overall power consumed by data centers worldwide has already reached the power consumption level of an entire country such as Argentina or the Netherlands. Within a data center, the communication infrastructure is estimated to drain about 10% of the overall power, assuming full utilization of the servers [1]. However, this assumption rarely holds in today’s computing platforms: servers are typically underutilized, because redundancy is added to ensure good performance in case of failures, especially in computing platforms built from commodity hardware [3]. When recent improvements in server design that make servers more energy proportional (i.e., power consumption proportional to utilization) are taken into account, the network power consumption is expected to reach up to 50% of the overall power consumption [1]. Thus, energy-efficient and energy-proportional interconnection solutions are sought.
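
The move from a share of about 10% to one of up to 50% can be reproduced with simple arithmetic. The sketch below is a simplified model, not the method of [1]: it assumes that servers are perfectly energy proportional, that the network draws constant power regardless of load, and that the network accounts for 10% of total power at full server utilization. The function name `network_power_share` is hypothetical.

```python
def network_power_share(server_utilization: float,
                        network_share_at_full_load: float = 0.10) -> float:
    """Network share of total power at a given server utilization.

    Simplifying assumptions (labeled, not from the chapter):
    - server power scales linearly with utilization (energy proportional);
    - network power is constant, i.e., not energy proportional;
    - powers are normalized so that total power is 1 at full utilization.
    """
    if not 0.0 < server_utilization <= 1.0:
        raise ValueError("server_utilization must be in (0, 1]")
    if not 0.0 < network_share_at_full_load < 1.0:
        raise ValueError("network_share_at_full_load must be in (0, 1)")

    network_power = network_share_at_full_load
    server_power = (1.0 - network_share_at_full_load) * server_utilization
    return network_power / (network_power + server_power)


if __name__ == "__main__":
    for u in (1.0, 0.5, 0.25, 0.11):
        print(f"utilization {u:.2f}: network share {network_power_share(u):.2f}")
```

Under these assumptions the network share is 10% at full utilization and reaches roughly 50% when the servers run at about 11% utilization. This is why a network that is not energy proportional dominates the power budget once the servers become energy proportional.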

This chapter explains how photonic technologies can help meet the two crucial challenges of today’s interconnection networks for computing platforms, namely, scalability and energy efficiency. State-of-the-art photonic technologies suitable for connecting and replacing electronic switches are introduced and discussed in Section 1.2. In particular, interconnection networks realized with photonic devices are proposed for optically switching data between the compute nodes or servers of a computing platform. The fundamental strategies for improving the scalability and energy efficiency of optical interconnection networks are presented and explained with examples in Section 1.3. Finally, in Section 1.4, a case study built from recent results obtained in the RODIN* project is used to demonstrate the proposed strategies and the potential benefits of optical interconnection networks for next-generation computing platforms.

1.2 PHOTONIC TECHNOLOGY SOLUTIONS IN COMPUTING PLATFORMS

To overcome the limitations of electronics, photonic technology solutions have been proposed either to substitute individual point-to-point links or to replace entire electrical switching architectures. Photonic communication systems have been shown to achieve large capacity with low attenuation and crosstalk, and they benefit from the data rate transparency of the optical physical layer. Such features make photonic point-to-point links excellent replacements for the copper cables interconnecting today’s electronic switches. Indeed, photonic point-to-point links are already widely used in the new generations of computing platforms [29]. The distance to cover depends on the interconnection level within the system (Figure 1.1). Interconnection of racks over distances up to a few hundred meters can be realized with multimode fiber links. Switching, however, remains in the electronic domain.

To mitigate the current electronic switching limitations, the scientific community has proposed introducing optics within the interconnection networks themselves, which has been shown to achieve greater scalability and throughput than electronic switches [14,39]. The design and realization of all-optical interconnection networks remain challenging, however, due to the lack of effective solutions for all-optical buffering and processing, and due to their nonnegligible power consumption [49].

This section presents the available photonic solutions for both point-to-point links and interconnection networks, the architectural design, and the control strategies for photonic interconnection networks.

1.2.1 PHOTONIC POINT-TO-POINT LINKS

Compared to electrical links, photonic point-to-point links enable a much greater aggregated bandwidth-distance product, allowing both communication capacity and reach to increase. Telecommunication systems have made good use of this attribute, with aggregated bandwidth-distance products on the order of $10^6$ Gb/s·m [10,53]. In computing platforms, the number of shared elements and their physical separation have steered photonic technology development in a different direction, where the bandwidth density requirement becomes as important as the aggregated bandwidth.
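
The two figures of merit mentioned here can be illustrated with a minimal sketch. The lane count, reach, and connector width below are hypothetical values chosen only for the arithmetic. Defining bandwidth density as aggregate bandwidth per millimeter of connector edge is also an assumption made for this example; the chapter does not give that definition.

```python
def bandwidth_distance_product(lanes: int, rate_gbps: float, reach_m: float) -> float:
    """Aggregated bandwidth-distance product in Gb/s·m.

    The aggregate bandwidth is lanes * rate_gbps; multiplying by the reach
    gives the bandwidth-distance product of the whole parallel link.
    """
    if lanes < 1 or rate_gbps <= 0 or reach_m <= 0:
        raise ValueError("lanes, rate_gbps and reach_m must be positive")
    return lanes * rate_gbps * reach_m


def bandwidth_density(lanes: int, rate_gbps: float, edge_width_mm: float) -> float:
    """Aggregate bandwidth per mm of connector edge, in Gb/s/mm.

    This per-edge-length definition is an assumption for illustration.
    """
    if lanes < 1 or rate_gbps <= 0 or edge_width_mm <= 0:
        raise ValueError("lanes, rate_gbps and edge_width_mm must be positive")
    return lanes * rate_gbps / edge_width_mm


if __name__ == "__main__":
    # Hypothetical parallel link: 12 lanes at 25 Gb/s over 100 m,
    # terminated in a connector assumed to occupy 20 mm of board edge.
    print(bandwidth_distance_product(12, 25.0, 100.0), "Gb/s·m")
    print(bandwidth_density(12, 25.0, 20.0), "Gb/s/mm")
```

A short-reach link of this kind has a far smaller bandwidth-distance product than a long-haul telecommunication system, yet it must fit many lanes into a small connector footprint. This is the sense in which bandwidth density becomes as important as the aggregated bandwidth.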

A photonic point-to-point link consists of an optical source modulated by its associated electrical circuitry (e.g., a driver), the optical channel, and a photodetector with its associated electrical circuitry (e.g., a transimpedance amplifier). For better energy efficiency, photonic point-to-point links that do not require power-hungry clock recovery, SerDes, or digital-to-analog converters (DACs) and analog-to-digital converters (ADCs) are preferred. Hence, modulated data rates are often limited to the electrical line rate, and the data are sometimes not resynchronized. Optical sources based on uncooled vertical-cavity surface-emitting lasers (VCSELs) are used for spatially parallel communication over multicore fibers (e.g., ribbons or multifiber arrays) with one optical carrier per fiber core. Modulated VCSEL sources have been shown to meet the electrical line rate of 25 Gb/s [24,29] while being compact, low-cost, and energy efficient. After multimode transmission, pin photodetectors convert the signal back to the electrical domain. In recent years, optical active cables (OACs) have been heavily used and have become the prominent solution in the top supercomputers [48]. OACs integrate the optoelectrical (OE) components, making them compatible with the electrical sockets at the edge of a server’s motherboard and of the electronic switch ports. Furthermore, OAC development enables compatibility with the latest inte...