Section Introduction: The Nature of Processing

Listening is a dynamic process with contributions from multiple cognitive operations. This section defines listening in terms of four overlapping types of processing: neurological processing, linguistic processing, semantic processing, and pragmatic processing. A comprehensive understanding of listening needs to account for all four types of processing, indicating how these processes integrate and complement each other.

Chapter 1 describes neurological processing, which involves hearing, awareness, consciousness, and attention, and which activates a kind of experiential field in which all other processes operate. This initial chapter describes the universal nature of neurological processing and the way it is organized and experienced by all humans, whatever language they use. The chapter also attempts to outline individual differences in neurological processing in order to explain the personalized nature of the listening experience.

Chapter 2 describes linguistic processing, the aspect of listening that involves input from a linguistic source—what most language users would consider the fundamental aspect of listening to language. This second chapter begins with analysis of how speech is perceived and proceeds to describe the way in which listeners make sense of sound, through identifying units of spoken language, using prosodic features to group units of speech, parsing speech into grammatical units, and recognizing words as representative of ideas.

Chapter 3 details semantic processing, the aspect of listening that seeks comprehension and integrates memory and prior experience into understanding. This third chapter focuses on comprehension as a functional goal of listening, which involves constructing meaning and activating the memory structures that support what is being understood. Chapter 4 focuses on pragmatic processing, the aspect of listening that is embedded in a social and cultural context. While closely related to semantic processing, pragmatic processing centers on the notion of relevance: the idea that listeners take an active role in identifying relevant factors in verbal and non-verbal input and inject their own “agenda” into the process of constructing meaning.

Chapter 5 summarizes listening processes from the perspective of artificial intelligence, outlining the ways that computers “listen” by modeling the same types of linguistic, semantic, and pragmatic processing that humans employ. Chapter 6 serves as a pivotal chapter in the book, delineating the ways that these convergent listening processes develop in both first and second language acquisition.

Taken as a whole, Section I provides the kind of “basic research” that will help the reader understand the cognitive processes involved in listening more fully. The concepts explored in Section I will be utilized in the subsequent sections on teaching (Section II) and research (Section III).

1.1 Hearing

A natural starting point for exploring listening in teaching and research is hearing: the basic physical and neurological systems and processes involved in perceiving sound. We all experience hearing as if it were a separate sense, a self-contained system that we can turn on or off at will. However, hearing is part of a complex brain network and is interdependent with multiple neurological systems (Poeppel & Overath, 2014).

Though hearing cannot be separated from the overall brain network of which it is part, it is an identifiable system with an isolable function. Hearing is the primary physiological system that allows for the reception and conversion of sound waves. Sound waves reach the ear as minute fluctuations in air pressure, which can be measured in pascals (force per unit area: p = F/A). The normal threshold for human hearing is about 20 micropascals, roughly the sound of a mosquito flying about three meters away from the ear. The inner ear converts these pressure fluctuations into electrical pulses, which are transmitted almost instantaneously along the auditory nerve to the auditory cortex of the brain. As with other sensory phenomena, auditory sensations are considered to reach perception only if they are received and processed by a cortical area in the brain. Although we often think of sensory perception as a passive process, the responses of neurons in the auditory cortex can be strongly modulated by attention (Barbey & Barsalou, 2009).
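The 20-micropascal threshold is also the conventional reference pressure for the decibel scale of sound pressure level (SPL). That scale is defined as L = 20 log10(p / p0), with p0 = 20 µPa. The short Python sketch below is an illustration added here, not part of the original discussion. It applies p = F/A and converts pressures in pascals to dB SPL. The function names and sample pressures are hypothetical, chosen only to show the arithmetic.

```python
import math

# Reference pressure: the conventional threshold of human hearing, 20 micropascals.
P_REF_PA = 20e-6

def pressure_from_force(force_newtons: float, area_m2: float) -> float:
    """Pressure in pascals, p = F / A (1 Pa = 1 newton per square metre)."""
    return force_newtons / area_m2

def spl_db(pressure_pa: float) -> float:
    """Sound pressure level in decibels relative to 20 micropascals."""
    if pressure_pa <= 0:
        raise ValueError("pressure must be positive")
    return 20 * math.log10(pressure_pa / P_REF_PA)

# Illustrative pressures (assumed values, not measurements from the text).
for label, p in [("threshold", 20e-6), ("10x threshold", 200e-6), ("1 pascal", 1.0)]:
    print(f"{label}: {spl_db(p):.1f} dB SPL")
```

Because the scale is logarithmic, each tenfold increase in pressure adds 20 dB. A pressure of 1 pascal, which is 50,000 times the threshold pressure, therefore comes out at about 94 dB SPL.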

Beyond this conversion of external stimuli into auditory perceptions, hearing is the sense that is often identified with our affective experience of participating in events. Unlike our other primary senses, hearing offers unique observational and monitoring capacities. These allow us to perceive life’s rhythms and to adapt to the “vitality contours” of social events, that is, the affective manner in which social actions are carried out (Rochat, 2013). They also allow us to adapt to the tempo of human interaction in real time and to the “feel” of human contact and communication (Murchie, 1999).

In physiological terms, hearing is a neurological circuit closely integrated with the vestibular system of the brain. That system is responsible for spatial orientation (balance) and temporal orientation (timing). It also handles interoception, the monitoring of sensate data and the perceptual organization of experience from our internal bodily systems (Tang et al., 2012). Hearing also plays an important role in animating the brain, with particular harmonies, frequencies, and rhythms contributing to calming or stimulating responses in the brain. Of all our senses, hearing may be said to be the most grounded and the most essential to awareness, because it occurs in real time, in a temporal continuum. Hearing involves continually grouping incoming sound into pulse-like auditory events that span a period of several seconds (Handel, 2006). Sound perception involves constantly anticipating what is about to be heard (hearing forward) while also retrospectively organizing what has just been heard (hearing backward), in order to assemble coherent packages of sound (Carriani & Micheyl, 2012).

While hearing provides a basis for listening, it is only a precursor to it. Though the terms hearing and listening are often used interchangeably in everyday talk, there are essential differences between them. Both are initiated through sound perception, but the difference between them is essentially one of degree of intention (Roth, 2012). Intention operates at several levels. At its most basic level, it involves acknowledging a distal source, being willing to be influenced by this source, and wanting to understand it to some degree (Kriegel, 2013).

In psychological terms, perception creates a representation of these distal objects by detecting and differentiating properties in the energy field (Poeppel et al., 2012). In the case of audition, the energy field is the air surrounding the listener. The listener detects shifts in intensity in the form of sound waves, which are minute movements in the air. The listener then differentiates their patterns through a fusion of temporal processing in the left hemisphere of the brain and spectral processing in the right. The perceiver immediately assigns the patterns in the sound waves to previously learned categories of emotion and cognition (Brosch et al., 2010; Mattson, 2014).

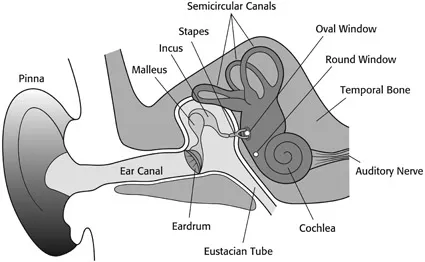

The anatomy of hearing is elegant in its efficiency. The human auditory system consists of the outer ear, the middle ear, the inner ear, and the auditory nerves connecting to the brain stem. Several mutually dependent subsystems complete the system (see Figure 1.1).

The outer ear consists of the pinna, the part of the ear we can see, and the ear canal. The intricate, funnel-like folds of the pinna filter and amplify incoming sound, particularly the higher frequencies, and help us locate the source of a sound.

Sound waves travel down the canal and cause the eardrum to vibrate. These vibrations are passed along through the middle ear, which acts as a sensitive transformer. It consists of three small bones (the ossicles) that link the eardrum to a small membrane-covered opening into the inner ear (the oval window). The major function of the middle ear is to ensure the efficient transfer of sound to the fluids inside the cochlea. At this stage the sound still travels as pressure waves in air; in the cochlea it will be converted to electrical pulses.
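The “transformer” role of the middle ear can be made concrete with the relation p = F/A. Textbook accounts commonly attribute the middle ear’s pressure gain to two factors:

- The eardrum collects force over a much larger area than the stapes footplate delivers it to at the oval window.
- The ossicular chain acts as a small lever.

The Python sketch below uses approximate, commonly cited values: an effective eardrum area of about 55 mm², a footplate area of about 3.2 mm², and a lever ratio of about 1.3. These figures are assumptions for illustration, and published estimates vary.

```python
import math

# Approximate anatomical values (assumptions for illustration; published estimates vary).
EARDRUM_AREA_MM2 = 55.0    # effective vibrating area of the tympanic membrane
FOOTPLATE_AREA_MM2 = 3.2   # area of the stapes footplate in the oval window
LEVER_RATIO = 1.3          # mechanical advantage of the ossicular lever

def middle_ear_pressure_gain(eardrum_area: float, footplate_area: float, lever: float) -> float:
    """Ratio of pressure at the oval window to pressure at the eardrum.

    Since p = F / A, concentrating the same force onto a smaller area raises
    the pressure by the area ratio; the lever multiplies the force further.
    """
    return (eardrum_area / footplate_area) * lever

gain = middle_ear_pressure_gain(EARDRUM_AREA_MM2, FOOTPLATE_AREA_MM2, LEVER_RATIO)
gain_db = 20 * math.log10(gain)  # express the pressure ratio in decibels
print(f"pressure gain: about {gain:.0f}x ({gain_db:.0f} dB)")
```

With these assumed values the gain comes out at roughly 22 times, or about 27 dB. That is in the range commonly cited for how much the middle ear offsets the loss that would occur if airborne sound struck the cochlear fluid directly.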

Figure 1.1 The mechanism of hearing. Sound waves travel down the ear canal and cause the eardrum to vibrate. These vibrations are passed along through the middle ear, which acts as a sensitive transformer consisting of three small bones (malleus, incus, and stapes). The bones link the eardrum to a small membrane-covered opening into the inner ear (the oval window). The major function of the middle ear is to ensure the efficient transfer of sound, still traveling as pressure waves in air, to the fluids inside the cochlea (the inner ear). There it is converted to electrical pulses and passed along the auditory nerve to the auditory cortex in the brain for further processing.

In addition to this transmission function, the middle ear has a vital protective function. Tiny muscles attached to the ossicles can contract reflexively to reduce the level of sound reaching the inner ear. This reflex action occurs when we are exposed to sudden loud sounds, such as the thud of a dropped book or the wail of a police siren. The contraction protects the delicate hearing mechanism from damage if the loudness persists. Interestingly, the same reflex action also occurs automatically when we begin to speak. In this way the reflex protects us from receiving too much feedback from our own speech and thus from becoming distracted by it.

The cochlea is the focal structure of the ear in auditory perception. The cochlea is a small bony structure, about the size of an adult thumbnail, that is narrow at one end and wide at the other. The cochlea is filled with fluid, and its operation is fundam...