PART I

Aural Awareness

CHAPTER 1

Hearing and Listening

Hearing

Information: The Mechanics of Hearing

The Ear–Brain Connection

Machine Listening

Listening

Listening Modes

Listening Situations

Comments from the Case Studies

Food for Thought

Projects

Project 4 (Short): Listen, Listen

Project 5 (Medium): Soundwalk

Project 6 (Long): Soundscape Composition

Further Reading

Suggested Listening

HEARING

Hearing is the means whereby we listen. The threshold of normal hearing is 20 dB. The lived experience of hearing, however, is not at the threshold, but rather within a given range. For example, human speech occupies a zone roughly between 200 and 4000 Hz and between 20 and 65 dB. A barking dog registers approximately 75 dB and a road drill more than 120 dB. A piano is roughly 80–90 dB within a frequency range of 27.5–4186 Hz.
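The decibel figures above sit on a logarithmic scale, so equal steps in dB correspond to equal ratios of sound pressure. The following Python sketch assumes the standard 20·log10 pressure convention (the text does not state which reference level its figures use) and works only with level differences, which do not depend on the reference. The function names are illustrative.

```python
import math


def level_difference_to_pressure_ratio(delta_db: float) -> float:
    """Convert a level difference in dB to a ratio of sound pressures.

    Assumes the usual pressure convention: delta_db = 20 * log10(p2 / p1).
    """
    return 10.0 ** (delta_db / 20.0)


def pressure_ratio_to_level_difference(ratio: float) -> float:
    """Convert a ratio of sound pressures to a level difference in dB."""
    return 20.0 * math.log10(ratio)


if __name__ == "__main__":
    # Levels quoted in the text (approximate figures).
    barking_dog_db = 75.0
    road_drill_db = 120.0

    ratio = level_difference_to_pressure_ratio(road_drill_db - barking_dog_db)
    print(f"Road drill vs. barking dog: pressure ratio of about {ratio:.0f} to 1")
```

Under this convention, every additional 20 dB multiplies the sound pressure by ten, which is why the gap between a barking dog and a road drill is far larger than the raw numbers suggest.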

The full complexity of the act of hearing is best appreciated when taken out of the laboratory and analyzed in the real world. The ‘cocktail-party effect’ is a good example: listening to a single person’s speech in a room full of loud chatter, or subconsciously monitoring the surrounding sounds so that when someone mentions something significant, the ear can immediately ‘tune in’ and listen. This is an example of selective attention and is normally done with relatively little apparent effort, but it is in fact a highly complex operation. First, the desired sounds must be separated from what is masking them.1 This is achieved by using both ears together to discriminate. Second, the words must be connected into a perceptual stream. This is done using a process called auditory scene analysis, which groups together sounds that are similar in frequency, timbre, intensity and spatial or temporal proximity. The ears and the brain together act as a filter on the sound.

However, the ears are not the only way to register sound. In an essay on her website, the profoundly deaf percussionist Evelyn Glennie discusses how she hears:

She goes on to explain that she can distinguish pitches and timbres using bodily sensation. This can be equally true when there is a direct connection to the human body. A good example is bone-conduction hearing devices, which are designed for people who cannot benefit from normal in-ear hearing aids. These are mounted directly into the skull and sound is translated into vibrations that can be detected by the inner ear.

Some digital musicians have taken this idea a stage further, to the extent of exploring the sonic properties of the human body itself. Case study musician Atau Tanaka works with his body in this way. He comments:

INFORMATION: THE MECHANICS OF HEARING

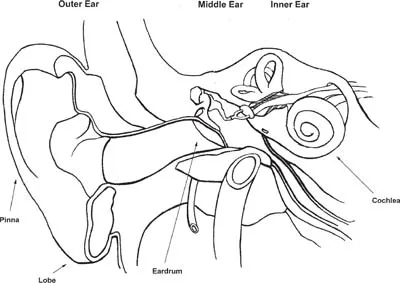

The human ear is divided into three sections. The outer ear comprises the external flap (pinna) with a central depression and the lobe. The various furrows and ridges in the outer ear help in identifying the location and frequency content3 of incoming sounds, as can be verified by manipulating the ear while listening. This is further enhanced by the acoustic properties of the external canal (sometimes called the auditory canal), which leads through to the eardrum, and resonates at approximately 4 kilohertz (kHz). The final stage of the outer ear is the eardrum, which converts the variations in air pressure created by sound waves into vibrations that can be mechanically sensed by the middle ear.
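The resonance of the external canal can be roughly estimated by treating it as a uniform tube that is open at the outer end and closed at the eardrum, that is, as a quarter-wavelength resonator. This is a simplifying assumption that does not come from the text, and the canal lengths used below (about 2.1 to 2.5 cm) are assumed typical values rather than figures given here. A minimal Python sketch:

```python
SPEED_OF_SOUND_M_PER_S = 343.0  # assumed speed of sound in air at about 20 degrees C


def quarter_wave_resonance(length_m: float,
                           speed_m_per_s: float = SPEED_OF_SOUND_M_PER_S) -> float:
    """Fundamental resonance (Hz) of a tube open at one end and closed at the other.

    Uses the idealized quarter-wavelength relation f = c / (4 * L).
    """
    if length_m <= 0:
        raise ValueError("length_m must be positive")
    return speed_m_per_s / (4.0 * length_m)


if __name__ == "__main__":
    # Assumed canal lengths in meters (hypothetical illustrative values).
    for length in (0.021, 0.025):
        freq = quarter_wave_resonance(length)
        print(f"Canal length {length * 100:.1f} cm -> resonance near {freq:.0f} Hz")
```

With these assumed lengths the estimate falls between roughly 3.4 and 4.1 kHz, the same order as the approximately 4 kHz resonance mentioned above. A real ear canal is neither uniform nor rigid, so the figure should be read as an approximation only.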

The middle ear comprises three very small bones (the smallest in the human body), whose job is to transmit the vibrations to the inner ear. The inner ear contains various canals that are important for sensing balance, as well as the cochlea, a spiral structure that registers sound. Within the cochlea lies the organ of Corti, the organ of hearing, which consists of four rows of tiny hair cells or cilia sitting along the basilar membrane.

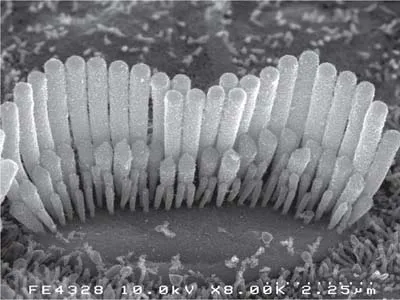

Figure 1.4 shows the sensory hair bundle of an inner hair cell from a guinea pig’s hearing organ in the inner ear. There are three rows of outer hair cells (OHCs) and one row of inner hair cells (IHCs). The IHCs are sensory cells, converting motion into signals to the brain. The OHCs are more ‘active’, receiving neural inputs and converting them into motion. The relationship between the OHCs and the IHCs is a kind of feedback: an electromechanical loop that effectively amplifies the sound. Vibrations made by sound cause the hairs to be moved back and forth, alternately stimulating and inhibiting the cell. When the cell is stimulated, it causes nerve impulses to form in the auditory nerve, sending messages to the brain.

The cochlea has approximately 2.75 turns in its spiral and measures roughly 35 millimeters. It gradually narrows from a wide base (the end nearest the middle ear) to a thin apex (the furthest tip). High-frequency responsiveness is located at the base of the cochlea and the low frequencies at the apex. Studies4 have shown that the regular spacing of the cilia and their responses to vibrations of the basilar membrane mean that a given frequency f and another frequency f × 2 are heard to be an octave apart. Some of the most fundamental aspects of musical perception, such as pitch, tuning and even timbre, come down to this physical fact.
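The octave relationship between f and f × 2 means that musical distance follows the logarithm of the frequency ratio rather than the difference in hertz. The Python sketch below illustrates this using the piano range quoted earlier in the chapter (27.5–4186 Hz). The key-frequency formula assumes twelve-tone equal temperament with A4 tuned to 440 Hz, which is a common convention but is not stated in the text; the function names are illustrative.

```python
import math


def octaves_between(f_low_hz: float, f_high_hz: float) -> float:
    """Number of octaves separating two frequencies (each doubling is one octave)."""
    return math.log2(f_high_hz / f_low_hz)


def piano_key_frequency(key_number: int, a4_hz: float = 440.0) -> float:
    """Frequency of a key on an 88-key piano (key 1 = A0, key 49 = A4).

    Assumes twelve-tone equal temperament: each semitone is a ratio of 2 ** (1 / 12).
    """
    if not 1 <= key_number <= 88:
        raise ValueError("key_number must be between 1 and 88")
    return a4_hz * 2.0 ** ((key_number - 49) / 12.0)


if __name__ == "__main__":
    lowest = piano_key_frequency(1)
    highest = piano_key_frequency(88)
    print(f"Lowest key:  {lowest:.1f} Hz")
    print(f"Highest key: {highest:.1f} Hz")
    print(f"Span: {octaves_between(lowest, highest):.2f} octaves")
```

Under these assumptions the lowest and highest keys match the 27.5 Hz and 4186 Hz limits given for the piano, a span of about seven and a quarter octaves.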

The Ear–Brain Connection

The scientific study of hearing is called audiology, a field that has made great advances in recent years, especially with the advent of new technologies such as digital hearing aids and cochlear implants. For musical purposes, an awareness of the basic aspects of hearing is usually sufficient, but a deeper investigation of the human auditory system can be inspiring and even technically useful. In particular, experiments with sound localization and computer modeling of spatial effects derive to some extent from these understandings.

The way in which the brain deals with the acoustical information transmitted from the ear is still not fully understood. The study of this part of the hearing process ranges across several disciplines, from psychology to neuroscience or, more precisely, cognitive neuroscience.5 The latter uses biological findings to build a picture of the processes of the human brain, from the molecular and cellular levels through to that of whole systems. In practice, neuroscience and psychology are often combined and interlinked.

To simplify considerably: the cilia transmit information by sending signals to the auditory cortex, which lies in the lower-middle area of the brain. Recent techniques such as PET scans6 and functional MRI scans7 have enabled scientists to observe brain activity during hearing, seeing, smelling, tasting and touching. The locations of hearing and seeing activity, for example, are quite different, and the picture is more complex still, because different types of hearing stimulate different parts of the brain. Music itself can stimulate many more areas than just those identified with different types of hearing, as emotions, reasoning and all the other facets of human thinking are brought into play.

Machine Listening

Various attempts to build computer models of the auditory system have had some impact on digital music. Computational auditory scene analysis, for example, tries to extract single streams of sound from more complex polyphonic layers, which has had consequences for perceptual audio coding, such as in compression algorithms like mp3. Feature extraction techniques have e...