- 392 pages

- English

Digital Interface Handbook

About this book

A digital interface is the technology that allows interconnectivity between multiple pieces of equipment. In other words, hardware devices can communicate with each other and accept audio and video material in a variety of forms.

The Digital Interface Handbook is a thoroughly detailed manual for those who need to get to grips with digital audio and video systems. Francis Rumsey and John Watkinson bring together their combined experience to shed light on the differences between audio interfaces and show how to make devices 'talk to each other' in the digital domain despite their subtle differences. They also include detailed coverage of all the regularly used digital video interfaces.

New information included in this third edition: dedicated audio interfaces, audio over computer network interfaces and revised material on practical audio interfacing and synchronisation.

1 Introduction to Interfacing

1.1 The Need for Digital Interfaces

1.1.1 Transparent Links

Digital audio and video systems make it possible for the user to maintain a high and consistent sound or picture quality from beginning to end of a production. Unlike analog systems, the quality of the signal in a digital system need not be affected by the normal processes of recording, transmission or transferral over interconnects, but this is only true provided that the signal remains in the digital domain throughout the signal chain. Converting the signal to and from the analog domain at any point has the effect of introducing additional noise and distortion, which will appear as audible or visual artefacts in the programme material. Herein lies the reason for adopting digital interfaces when transferring signals between digital devices – it is the means of ensuring that the signal is carried ‘transparently’, without the need to introduce a stage of analog conversion. It enables the receiving device to make a ‘cloned’ copy of the original data, which may be identical numerically and temporally.

1.1.2 The Need for Standards

The digital interface between two or more devices in an audio or video system is the point at which data is transferred. Digital interconnects allow programme data to be exchanged, and they may also provide a certain capacity for additional information such as ‘housekeeping data’ (to inform a receiver of the characteristics of the programme signal, for example), text data, subcode data replayed from a tape or disk, user data, communications channels (e.g. low quality speech) and perhaps timecode. These applications are all covered in detail in the course of this book. Standards have been developed in an attempt to ensure that the format of the data adheres to a convention and that the meaning of different bits is clear, in order that devices may communicate correctly, but there is more than one standard and thus not all devices will communicate with each other. Furthermore, even between devices using ostensibly the same interface there are often problems in communication due to differences in the level or completeness of implementation of the standard, the effects of which are numerous. Older devices may have problems with data from newer devices or vice versa, since the standard may have been modified or clarified over the years.

As digital audio and video systems become more mature the importance of correct communication also increases, and the evidence is that manufacturers are now beginning to take correct implementation of interface standards more seriously, since more people are adopting fully digital signal chains. But it must be said that at the same time the applications of such technology are becoming increasingly complicated, and the additional data which accompanies programme data on many interfaces is becoming more comprehensive – there being a wide range of different uses for such data. Thus manufacturers must decide the extent to which they implement optional features, and what to do with the data which is not required or understood by a particular device. Eventually it is likely that digital interface receivers will become more ‘intelligent’, such that they may analyse the incoming data and adapt so as to accommodate it with the minimum of problems, but this is rare in today’s systems and would currently add considerably to the cost of them.

1.1.3 Digital Interfaces and Programme Quality

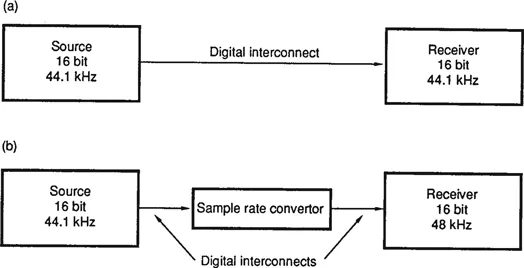

To say that signal quality cannot be affected provided that the signal remains in the digital domain is a bold statement and requires some qualification, since there will be cases where signal processing in the digital chain may affect quality. The statement is true if it is possible to assume that the sampling rate of the signal, its resolution (number of bits per sample) and the method of quantization all remain unchanged (see Chapter 2). Further, one must assume that the signal has not been subjected to any processing, since filtering, gain changing, and other such operations may introduce audible or visual side effects. Operations such as sampling frequency conversion and changes in resolution (say, between 20 and 16 bits per sample) may also introduce artefacts, since these can never be ‘perfect’ processes. Therefore, an operation such as copying a signal digitally between two recording systems with the same characteristics is a transparent process, resulting in absolutely no loss of quality (see Figure 1.1), but copying between two recorders with different sampling rates via a sampling frequency convertor is not (although the side effects in most cases are very small).

Figure 1.1 (a) A ‘clone’ copy may be made using a digital interconnect between two devices operating at the same sampling rate and resolution. (b) When sampling parameters differ, digital interconnects may still be used, such as in this example, but the copy will not be a true ‘clone’ of the original.
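The distinction between a ‘clone’ and a copy that has passed through a change of resolution can be illustrated with a minimal sketch. It assumes plain truncation from 20 to 16 bits with no dither, which is only one of several ways a real device might shorten the word length; the function names `requantize` and `restore` are hypothetical and do not come from any standard.

```python
import random

def requantize(sample: int, from_bits: int, to_bits: int) -> int:
    """Shorten the word length by discarding least significant bits (truncation, no dither)."""
    return sample >> (from_bits - to_bits)

def restore(sample: int, from_bits: int, to_bits: int) -> int:
    """Pad a short word back to the longer word length with zeros."""
    return sample << (to_bits - from_bits)

random.seed(0)
# Hypothetical 20-bit signed samples standing in for programme material.
original = [random.randrange(-(1 << 19), 1 << 19) for _ in range(1000)]

# Case (a): same sampling rate and resolution, so the copy is a straight transfer.
clone = list(original)

# Case (b): the copy passes through a 16-bit stage before returning to 20 bits.
round_trip = [restore(requantize(s, 20, 16), 16, 20) for s in original]

clone_identical = clone == original
max_error = max(abs(a - b) for a, b in zip(original, round_trip))

print("clone identical:", clone_identical)
print("largest error after 20 -> 16 -> 20 bits:", max_error, "LSB")
```

The straight copy is numerically identical to the source, whereas the round trip through 16 bits loses the four least significant bits of every sample, so the error can be as large as 15 steps of the 20-bit scale.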

Confusion arises when users witness a change in sound or picture quality even in the former of the above two cases, leading them to suggest that digital copying is not a transparent process. The root of this problem, however, is not in the digital copying process but in the digital-to-analog conversion process of the device which the operator is using to monitor the signal, as discussed in greater detail in Chapter 2. It is true that timing instabilities and (occasionally) errors may arise when signals are transferred digitally between devices, but both of these are normally correctable or avoidable within the digital domain. Since data errors are extremely rare in digitally interfaced systems, it is timing instabilities which are most likely to affect the convertor. Poor quality clock recovery in the receiver and lack of timebase correction in the convertor often allow timing instabilities resulting from the digital interface to affect programme quality when it is monitored in the analog domain. This does not mean that the digital programme itself is of poor quality, simply that the convertor is incapable of rejecting the instability. Although it is difficult to ensure low jitter clock recovery in receivers, especially with the stability required for very high convertor resolutions (e.g. 20 bits in audio), it is here that the root of the problem lies and not in the nature of digital signals themselves, since it is possible to correct such instabilities with digital signals but not normally possible with analog signals. This is discussed further in Chapter 2 and in section 6.4.3, and for additional coverage of these topics the reader is referred to Rumsey¹ and Watkinson²,³.

1.2 Analog and Digital Communication Compared

Before going on to examine specific digital audio and video interfaces it would be useful briefly to compare analog and digital interfaces in general, and then to look at the basic principles of digital communication.

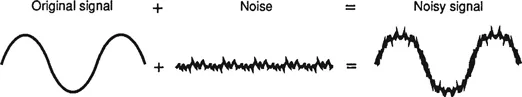

In an analog wire link between two devices the baseband audio or video signal from a transmitter is carried directly in the form of variations in electrical voltage. Any unwanted signals induced in the wire, such as radio frequency interference (RFI) or mains hum, will be indistinguishable from the wanted signal at the receiver, as will any noise or timing instability introduced between transmitter and receiver, and these are likely to affect signal quality (see Figure 1.2). Techniques such as balancing (see section 1.7.1) and the use of transmission lines (see section 1.7.3) are used in analog communication to minimize the effects of long lines and interference. Forms of modulation are used in analog links, especially in radio frequency transmission, whereby the baseband (unmodulated) signal is used to alter the characteristics of a high frequency carrier, resulting in a spectrum with a sideband structure around the carrier. Modulation may give the signal increased immunity to certain types of interference⁴, but modulated analog signals are rarely carried over wire links within a studio installation.

Figure 1.2 If noise is added to an analog signal the result is a noisy signal. In other words, the noise has become a feature of the signal which may not be separated from it.

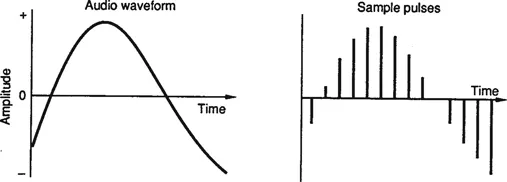

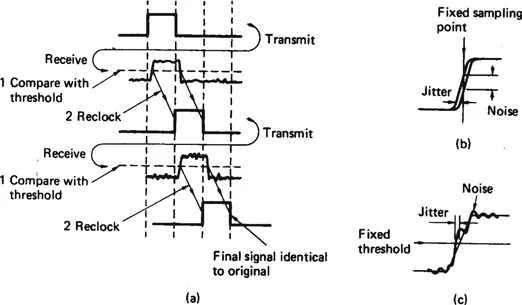

Pulse amplitude modulation (PAM) is a means of modulating an analog signal onto a series of pulses, such that the amplitude of the pulses varies according to the instantaneous amplitude of the analog signal (see Figure 1.3) and this is the basis of pulse code modulation (PCM) whereby the amplitude of PAM pulses is quantized, resulting in a binary signal that is exceptionally immune to noise and interference since it only has two states. This process normally takes place in the analog-to-digital (A/D) convertor of an audio or video system, described in more detail in Chapter 2. PCM is the basis of all current digital audio and video interfaces, and, as shown in Figure 1.4, the effects of unwanted noise and timing instability may be rejected by reclocking the binary signal and comparing it with a fixed threshold. Provided that the receiving device is able to distinguish between the two states, one and zero, and can determine the timing slot in which each binary digit (bit) resides, the system may be shown to be able to reject any adverse effects of the link. Over long links a digital signal may be reconstructed regularly, in order to prevent it from becoming impossibly distorted and difficult to decode. Of course there will be cases in which an interfering signal will cause a sufficiently large effect on the digital signal to prevent it from being correctly reconstructed, but below the threshold at which this happens there will be no effect at all.

Figure 1.3 In pulse amplitude modulation (PAM) a regular chain of pulses is amplitude-modulated by the baseband signal.

Figure 1.4 (a) A binary signal is compared with a threshold and reclocked on receipt, thus the meaning will be unchanged. (b) Jitter on a signal can appear as noise with respect to fixed timing. (c) Noise on a signal can appear as jitter when compared with a fixed threshold.
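The threshold behaviour described above can be sketched in a few lines. This is a simplified model rather than a description of any real interface: it assumes signalling levels of 0 and 1 (arbitrary units), a fixed threshold of 0.5, bounded uniform noise, and a receiver that already knows the timing slot of each bit, so reclocking itself is not modelled. The names `transmit` and `regenerate` are hypothetical.

```python
import random

def transmit(bits, noise_peak, high=1.0, low=0.0):
    """Map bits to two voltage levels and add bounded uniform noise (assumed channel model)."""
    return [(high if b else low) + random.uniform(-noise_peak, noise_peak) for b in bits]

def regenerate(levels, threshold=0.5):
    """Compare each received level with a fixed threshold to recover the bit."""
    return [1 if v > threshold else 0 for v in levels]

random.seed(1)
bits = [random.randint(0, 1) for _ in range(2000)]

# Noise peak below half the level spacing: every bit stays on its own side of the threshold.
quiet = regenerate(transmit(bits, noise_peak=0.4))
# Noise peak above half the level spacing: some bits cross the threshold.
noisy = regenerate(transmit(bits, noise_peak=0.9))

quiet_errors = sum(a != b for a, b in zip(bits, quiet))
noisy_errors = sum(a != b for a, b in zip(bits, noisy))

print("errors with noise peak 0.4:", quiet_errors)
print("errors with noise peak 0.9:", noisy_errors)
```

While the interference stays below the decision margin the recovered data is identical to what was sent, so the noise has no effect at all. Once the margin is exceeded, bit errors appear, which matches the threshold effect described in the text.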

Compared with an analog baseband signal, its digital counterpart normally requires a considerably greater bandwidth, as can be seen from the diagrams above, but the advantage of a digital signal is that it can normally survive over a channel with a relatively low signal-to-noise ratio. Another advantage of digital communications is that a number of signals of different types may be carried over the same physical link without interfering with each other, since they may be time-division multiplexed (TDM), as discussed in section 1.5.5.
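As a rough illustration of time-division multiplexing, the sketch below interleaves words from several sources into one serial stream and separates them again at the receiver. The channel contents and the names `tdm_multiplex` and `tdm_demultiplex` are invented for the example; real interfaces add framing and synchronisation patterns so that the receiver can locate the slots, which this sketch assumes are already known.

```python
def tdm_multiplex(channels):
    """Interleave equal-length channels: each frame carries one word from every channel."""
    frame_count = len(channels[0])
    if any(len(ch) != frame_count for ch in channels):
        raise ValueError("all channels must carry the same number of words")
    return [ch[i] for i in range(frame_count) for ch in channels]

def tdm_demultiplex(stream, channel_count):
    """Recover each channel by reading its own time slot in every frame."""
    return [stream[slot::channel_count] for slot in range(channel_count)]

# Hypothetical data: two programme channels and one auxiliary data channel.
left = [10, 11, 12, 13]
right = [20, 21, 22, 23]
status = [0, 1, 0, 1]

stream = tdm_multiplex([left, right, status])
recovered = tdm_demultiplex(stream, 3)

print(stream)
print(recovered)
```

Because each source occupies its own slot in time, the three signals share one link without interfering with each other, and the receiver recovers each of them unchanged.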

1.3 Quantization, Binary Data and Word Length

When a PAM pulse is quantized it is converted into a PCM word of a certain length or resolution. The number of bits in the word determines the accuracy with which the original analog signal may be represented: a larger number of bits allows more accurate quantization, resulting in lower noise and distortion. This process is covered further in Chapter 2, and thus will not be discussed in detail here; suffice it to say that for digital audio, word lengths of up to 24 bits may be considered necessary for high sound quality, whereas for digital video a smaller number of bits per sample (typically 8–10) is adequate. Since the number of bits per sample is related directly to the signal-to-noise (S/N) ratio of the system, it can be deduced that the S/N ratio required for high quality sound is greater than that required for high quality pictures.
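The link between word length and S/N ratio can be made concrete with the standard textbook approximation for an ideal uniform quantizer driven by a full-scale sine wave, which gives roughly 6 dB per bit plus a constant of about 1.76 dB. This formula is not quoted in the text above and is offered only as an idealized guide; practical convertors, dither and video measurement conventions all give different figures. The function name `ideal_snr_db` is hypothetical.

```python
import math

def ideal_snr_db(bits: int) -> float:
    """Theoretical S/N (dB) of an ideal uniform quantizer with a full-scale sine input."""
    # 20*log10(2**bits) is about 6.02 dB per bit; 10*log10(1.5) is about 1.76 dB.
    return 20 * math.log10(2 ** bits) + 10 * math.log10(1.5)

# Word lengths mentioned in the text: 8-10 bits for video, up to 24 bits for audio.
for bits in (8, 10, 16, 20, 24):
    print(f"{bits:2d} bits: {ideal_snr_db(bits):6.1f} dB")
```

Under these assumptions each extra bit adds about 6 dB, so the gap between an 8-bit and a 24-bit word is close to 96 dB, which illustrates why the S/N requirement for high quality sound is described as greater than that for pictures.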

Although the resolution of audio samples may be greater than that of video samples, the sampling rate of a video system is much higher than that required for audio. Thus the total amount of data required per second to represent a moving video picture is considerably greater than that required to represent a sound signal. (In all cases it is assumed that no form of data reduction is used.) This has important implications when considering the requirements for different types of digital...

Table of contents

- Cover

- Half Title

- Title Page

- Copyright

- Contents

- Chapter 1: Introduction to interfacing

- Chapter 2: An introduction to digital audio and video

- Chapter 3: Digital transmission

- Chapter 4: Dedicated audio interfaces

- Chapter 5: Carrying real-time audio over computer interfaces

- Chapter 6: Practical audio interfacing

- Chapter 7: Digital video interfaces

- Chapter 8: Practical video interfacing

- Index
