- 336 pages

- English

About this book

The world of smart shoes, appliances, and phones is already here, but the practice of user experience (UX) design for ubiquitous computing is still relatively new. Design companies like IDEO and frogdesign are regularly asked to design products that unify software interaction, device design and service design -- which are all the key components of ubiquitous computing UX -- and practicing designers need a way to tackle practical challenges of design. Theory is not enough for them -- luckily the industry is now mature enough to have tried and tested best practices and case studies from the field.

Smart Things presents a problem-solving approach to addressing designers' needs and concentrates on process, rather than technological detail, to keep from being quickly outdated. It pays close attention to the capabilities and limitations of the medium in question and discusses the tradeoffs and challenges of design in a commercial environment. Divided into two sections, frameworks and techniques, the book discusses broad design methods and case studies that reflect key aspects of these approaches. The book then presents a set of techniques highly valuable to a practicing designer. It is intentionally not a comprehensive tutorial of user-centered design (that is covered in many other books), but it is a handful of techniques useful when designing ubiquitous computing user experiences.

In short, Smart Things gives its readers both the "why" of this kind of design and the "how," in well-defined chunks.

- Tackles design of products in the post-Web world where computers no longer have to be monolithic, expensive general-purpose devices

- Features broad frameworks and processes, practical advice to help approach specifics, and techniques for the unique design challenges

- Presents case studies that describe, in detail, how others have solved problems, managed trade-offs, and met successes

Part I

Frameworks

Chapter 1 Introduction

The middle of Moore’s law

The history of technology is a history of unintended consequences, of revolutions that never happened, and of unforeseen disruptions. Take railroads, for instance. In addition to quickly moving things and people around, railroads brought a profound philosophical crisis of timekeeping. Before railroads, clock time followed the sun. “Noon” was when the sun was directly above, and local clock time was approximate. This was accurate enough for travel on horseback or foot, but setting clocks by the sun proved insufficient to synchronize railroad schedules. One town’s noon would be a neighboring town’s 12:02, and a distant town’s 12:36. Trains traveled fast enough that these small changes added up. Arrival times now had to be determined not just by the time to travel between two places, but the local time at the point of departure, which could be based on an inaccurate church clock set with a sundial. The effect was that trains would run at unpredictable times and, with terrifying regularity, crash into each other.

It was not surprising that railroads wanted to have a consistent way to measure time, but what did “consistent” mean? Their attempt to answer this question led to a crisis of timekeeping: Do the railroads dictate when noon is, does the government, or does nature? What does it mean to have the same time in different places? Do people in cities need a different timekeeping method than farmers? The engineers making small steam engines in the early nineteenth century could not possibly have predicted that by the end of the century their invention would lead to a revolution in commerce, politics, geography, philosophy and just about all human endeavors.1

We can compare the last twenty years of computer and networking technology to the earliest days of steam power. Once, giant steam engines ran textile mills and pumped water between canal locks. Miniaturized and made more efficient, steam engines became more dispersed throughout industrial countries, powering trains, machines in workplaces, and even personal carriages. As computers shrink, they too are getting integrated into more places and contexts than ever before.

We are at the beginning of the era of computation and data communication embedded in, and distributed through, our entire environment. Going far beyond how we now define “computers,” the vision of ubiquitous computing (see Sidebar: The Many Names of Ubicomp) is of information processing and networking as key components in the design of everyday objects (Figure 1-1) using built-in computation and communication to make familiar tools and environments do their jobs better. It is the underlying (if unstated) principle guiding the development of toys that talk back, clothes that react to the environment, rooms that change shape depending on what their occupants are doing, electromechanical prosthetics that automatically manage chronic diseases and enhance people’s capabilities beyond what is biologically possible, hand tools that dynamically adapt to their user, and (of course) many new ways for people to be bad to each other.2

Figure 1-1 The adidas_1 shoe, with embedded microcontroller and control buttons.

(Courtesy Adidas)

The rest of this chapter discusses why the idea of ubiquitous computing is important now, and why user experience design is key to creating successful ubiquitous computing (ubicomp) devices and environments.

Sidebar: The Many Names of Ubicomp

There are many different terms applied to what I am calling ubiquitous computing (or ubicomp for short). Each term came from a different social and historical context. Although not designed to be complementary, each built on the definitions of those that came before (if only to help the group coining the term identify themselves). I consider them to be different aspects of the same phenomenon:

- Ubiquitous computing refers to the practice of embedding information processing and network communication into everyday, human environments to continuously provide services, information, and communication.

- Physical computing describes how people interact with computing through physical objects, rather than in an online environment or on monolithic, general-purpose computers.

- Pervasive computing refers to the prevalence of this new mode of digital technology.

- Ambient intelligence describes how these devices appear to integrate algorithmic reasoning (intelligence) into human-built spaces so that it becomes part of the atmosphere (ambiance) of the environment.

- The Internet of Things suggests a world in which digitally identifiable physical objects relate to each other in a way that is analogous to how purely digital information is organized on the Internet (specifically, the Web).

Of course, applying such retroactive continuity (a term the comic book industry uses to describe the pretense of order grafted onto a disorderly existing narrative) attempts to add structure to something that never had one. In the end, I believe that all of these terms actually reference the same general idea. I prefer to use ubiquitous computing since it is the oldest.

1.1 The hidden middle of Moore’s law

To understand why ubiquitous computing is particularly relevant today, it is valuable to look closely at an unexpected corollary of Moore’s Law. As new information processing technology gets more powerful, older technology gets cheaper without becoming any less powerful.

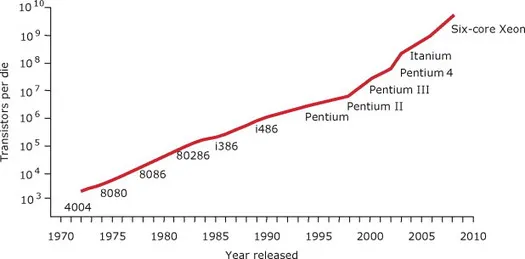

First articulated by Intel Corporation co-founder Gordon Moore, Moore’s Law is today usually paraphrased as a prediction that processor transistor densities will double every two years. This graph (Figure 1-2) is traditionally used to demonstrate how powerful the newest computers have become. As a visualization of the density of transistors that can be put on a single integrated circuit, it represents the way semiconductor manufacturers distill a complex industry into a single trend. The graph also illustrates a growing industry’s internal narrative of progress without revealing how that progress is going to happen.

Figure 1-2 Moore’s Law.

(Based on Moore, 2003)
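The two-year doubling in that common paraphrase can be made concrete with a small calculation. The sketch below is illustrative only: the function name `projected_density`, its parameters, and the starting value are assumptions made for this example, not figures from Moore or from this book.

```python
def projected_density(initial_density: float, years: float,
                      doubling_period_years: float = 2.0) -> float:
    """Project a density forward, assuming it doubles at a fixed interval.

    The two-year default mirrors the common paraphrase of Moore's Law;
    every name and value here is an illustrative assumption.
    """
    if doubling_period_years <= 0:
        raise ValueError("doubling_period_years must be positive")
    doublings = years / doubling_period_years
    return initial_density * 2 ** doublings


# Hypothetical starting point: 1,000 transistors per unit area.
for elapsed_years in (0, 2, 10, 20):
    print(elapsed_years, projected_density(1_000, elapsed_years))
```

Ten years at a two-year doubling period is five doublings, a 32-fold increase. The exponential form is why the trend is usually drawn on a logarithmic axis, where it appears as a straight line.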

Moore’s insight was dubbed a law, like a law of nature, but it does not actually describe the physical properties of semiconductors. Instead, it describes the number of transistors Gordon Moore believed would have to be put on a CPU for a semiconductor manufacturer to maintain a healthy profit margin given the industry trends he had observed in the previous five years. In other words, Moore’s 1965 analysis, which is what his law is based on, was not a utopian vision of the limits of technology. Instead, the paper (Moore, 1965) described a pragmatic model of factors affecting profitability in semiconductor manufacturing. Moore’s conclusion that “by 1975 economics may dictate squeezing as many as 65,000 components on a single silicon chip” is a prediction about how to compete in the semiconductor market. It is more of a business plan and a challenge to his colleagues than a scientific result.
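The arithmetic behind a figure of that size is easy to check. The sketch below assumes a starting point of 64 components in 1965 and one doubling per year; both numbers are assumptions chosen for illustration and are not stated in the text above, and `components_after` is a hypothetical helper.

```python
def components_after(start_components: int, years: int) -> int:
    """Component count after `years` annual doublings (an assumed rate)."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return start_components * 2 ** years


START_1965 = 64  # assumed starting count, for illustration only
print(components_after(START_1965, 1975 - 1965))
```

Under those assumptions, ten annual doublings multiply the starting count by 1,024, giving 65,536 components, the same order of magnitude as the 65,000 quoted from Moore's paper.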

Fortunately for Moore, his model fit the behavior of the semiconductor industry so well that it was adopted as an actual development strategy by most of the other companies in the industry. Intel, which he co-founded soon after writing that article, followed his projection almost as if it were a genuine law of nature and prospered.

The economics of this indus...

Table of contents

- Cover

- Title Page

- Copyright

- Table of Contents

- Preface

- Acknowledgments

- Part I: Frameworks

- Part II: Techniques

- References

- Index

Smart Things by Mike Kuniavsky is available in PDF and/or ePUB format.