eBook - ePub

The Path from Biomarker Discovery to Regulatory Qualification

- 206 pages

- English

About this book

The Path from Biomarker Discovery to Regulatory Qualification is a unique guide that focuses on biomarker qualification, its history and current regulatory settings in both the US and abroad. This multi-contributed book provides a detailed look at the next step to developing biomarkers for clinical use and covers overall concepts, challenges, strategies and solutions based on the experiences of regulatory authorities and scientists. Members of the regulatory, pharmaceutical and biomarker development communities will benefit the most from using this book—it is a complete and practical guide to biomarker qualification, providing valuable insight to an ever-evolving and important area of regulatory science.

For complimentary access to chapter 13, 'Classic' Biomarkers of Liver Injury, by John R. Senior, Associate Director for Science, Food and Drug Administration, Silver Spring, Maryland, USA, please visit the following site: http://tinyurl.com/ClassicBiomarkers

- Contains a collection of experiences of different groups taking different types of biomarkers to different levels of qualification and provides insightful case studies of an important area of regulatory science

- Focuses on practical advice, concepts, strategies and overall outcomes to support those working toward biomarker qualification for clinical use

- Offers a valuable resource for members of the regulatory, pharmaceutical and biomarker development communities

Information

Topic: Medicine

Subtopic: Pharmacology

Section 1

Introduction

1 Biomarker Applications in the Pharmaceutical Industry

2 Impact of Biomarker Qualification Regulatory Processes on the Critical Path for Drug Development

3 Regulatory Experience of Biomarker Qualification in the EMA

4 Regulatory Experience at the FDA, EMA, and PMDA

1

Biomarker Applications in the Pharmaceutical Industry

William B. Mattes, PharmPoint Consulting, Poolesville, Maryland, USA

The ‘explosion’ of biomarker research is at least partially a semantic contrivance: as Ian Dews and others have noted, ‘biomarkers’ are not new [1]. Rather, as Dews notes, ‘the word gave a long-overdue name’ to characteristics noted and monitored by the health care profession for at least three millennia for the purposes of diagnosis or prognosis. Indeed, monitoring the pulse for the purpose of assessing the degree of injury is mentioned in the Edwin Smith Papyrus, describing Egyptian medical practice ca. 1500 BCE [2], and uroscopy as a ‘science’ is considered to date from Hippocrates [3]. The often-quoted definition of the word, developed by the Biomarkers Definitions Working Group in 2001, is:

‘a characteristic that is objectively measured and evaluated as an indicator of normal biological processes, pathogenic processes, or pharmacologic responses to a therapeutic intervention’ [4].

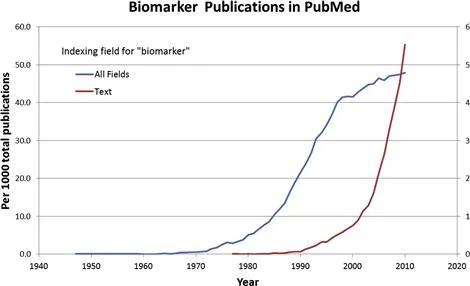

One might debate whether early uroscopy and the evaluation of pulse were ‘objectively measured’, yet the definition certainly applies to endpoints described before the first published use of the word ‘biomarker’. That distinction goes to a 1977 paper examining the hypothesis that serum ribonuclease levels were a ‘biomarker’ of myeloma tumor cells [5]. However, the first publication in PubMed associated with biomarker as an index term dates to a 1947 study of fetuin-A [6], which more recently has been shown to serve as a ‘biomarker’ of coronary artery disease [7] and neurodegenerative disease [8]. Thus, while there has been an ‘explosion’ of publications using the term ‘biomarker’, it was preceded by an ‘explosion’ of publications examining endpoints indexed as biomarkers (Fig. 1.1), which even in 2009 surpassed by a factor of 10 the number of publications actually using the term. The point is that ‘biomarkers’ have only recently received intentional discussion of their discovery, definition, application, and qualification.

FIGURE 1.1 Biomarker publications indexed in PubMed. Numbers were determined using Medline trend (Alexandru Dan Corlan. Medline trend: automated yearly statistics of PubMed; http://dan.corlan.net/medline-trend.html).

With the advent of technologies that allowed the precise determination of DNA sequence and gene expression, a new form of biomarker entered the pantheon – the genomic, or pharmacogenomic, biomarker. While genetic disorders such as Down’s syndrome could be characterized on a gross chromosomal level, determination of genetic polymorphisms, i.e., inter-individual variations in the sequence of genes, allowed for the correlation of DNA sequence variations with phenotypes relevant to disease and drug treatment. The problem of anticipating individual disease susceptibility or therapy response seemed to become tractable, as the tools gave clear, binary answers as to whether a given gene locus has a particular DNA sequence. In the 1990s the impact of genetic polymorphisms on drug metabolism in both animals and humans became clear and resulted in considerable research. Combined with the ancillary techniques for determining the individual enzymes responsible for a given drug’s biotransformation in both preclinical species and humans, pharmacogenomic approaches offered the promise of tailoring drug development programs so as to avoid or minimize the role of inter-individual variation in ADME (absorption, distribution, metabolism and excretion). The interest in the drug development community led to a survey of industry practices [9], an International Conference on Harmonization (ICH) guideline on definitions and sample handling [10], and guidance documents published by both the European Medicines Agency (EMA) [11] and the US Food and Drug Administration (FDA) [12]. The latter document suggests principles for the use of such pharmacogenomic biomarkers in early (i.e., exploratory) clinical studies, such as identifying populations that may require dosing adjustments or groups that might be at high risk for drug–drug interactions.
The document also notes that such pharmacogenomic biomarkers may include those unrelated to drug ADME, for example genetic variations in the drug target. Early clinical studies could identify the genetic variants most likely to respond to therapy, and that information could be used for patient enrichment strategies [13] in later clinical trials. The guidance acknowledges that in many cases samples may be collected during a given clinical trial and pharmacogenomic analysis conducted retrospectively to determine possible causes for toxicity or lack of efficacy. Indeed, the industry predilection for retrospective, in contrast to prospective, analysis of pharmacogenomic biomarkers is echoed in an analysis of industry practices over the time frame 2003–2008 [14].

The relative caution applied to the application of pharmacogenomic biomarkers may reflect a more general caution in applying relatively novel biomarkers, particularly in regard to clinical trial design. Indeed such designs that can incorporate biomarker analysis as a variable in addition to the treatment variable have been the subject of many publications [15–18]. However, as noted above, ‘biomarkers’ have been discovered and applied for millennia, and their use qualified through a variety of approaches. While it is not the intent of this chapter to discuss qualification, it is the intent of this book to present examples of the approaches that have recently been used to do that.

The history of biomarkers reflects the inherent assumption that any given biomarker was almost certainly suitable for only a limited number of uses or applications. Such applications have been reviewed and classified in many publications and books, but for the purposes of this book it is worthwhile to consider the various types of biomarkers and applications, as these classifications have impact on the approaches taken toward qualifying a type of biomarker for a type of application.

Before considering the various types of biomarkers and their applications, it is appropriate to address the one application that may be considered ‘the elephant in the room’ – surrogate endpoints. The Biomarkers Definitions Working Group defined a ‘surrogate endpoint’ as:

‘A biomarker that is intended to substitute for a clinical endpoint. A surrogate endpoint is expected to predict clinical benefit (or harm or lack of benefit or harm) based on epidemiologic, therapeutic, pathophysiologic, or other scientific evidence.’ [4]

Indeed, a ‘clinical endpoint’ is ‘a characteristic or variable that reflects how a patient feels, functions, or survives’ [4]. As such, clinical endpoints such as overall survival (OS, the time from randomization to death from any cause) are regarded as the most rigorous and credible measures of clinical benefit from therapeutic intervention. On the other hand, such clinical endpoints generally dictate a large study sample size and duration, and are influenced by multiple factors [19,20]. Conceptually, a surrogate endpoint is an endpoint that responds to therapeutic intervention in a shorter time frame and/or with a smaller sample size than the clinical endpoint for which it substitutes. Thus a surrogate endpoint serves to accelerate the drug development and regulatory registration processes [4,21–23]. Such an application makes regulatory acceptance of a biomarker as a surrogate endpoint highly desirable, 1) to the pharmaceutical industry as a means of reducing both cost and time to market, and 2) to patient advocates as a means for bringing promising therapies into practice sooner [24]. However, an effect on a surrogate endpoint usually is not in and of itself a benefit to the patient; rather the value of a surrogate endpoint response is in its connection to a subsequent clinical outcome [25]. As one clear example of a surrogate endpoint, accepted by both clinicians and regulators, blood pressure has been convincingly shown to be associated with cardiovascular disease risk in numerous epidemiological studies. Importantly, blood pressure responds to therapeutic interventions that improve cardiovascular clinical endpoints (e.g., reduce incidence of stroke) [25]. Adding confidence to the use of blood pressure as a surrogate endpoint is its response to interventions of several different types, including calcium channel blockers, diuretics and angiotensin-converting enzyme inhibitors.
Such a volume of supporting data is often not available at the time a biomarker is proposed as a surrogate endpoint. Statistical approaches to confirm a biomarker as a surrogate endpoint were proposed by Prentice in 1989 [26] and continue to evolve [27], but more often than not, biomarkers are suggested for use as surrogate endpoints on the basis of correlations and mechanistic assumptions. The reliance on correlation has been called into question [28]. Recently, examples in which surrogate endpoints were used to guide clinical trials but failed to accurately anticipate clinical outcomes [29] have led to skepticism and concern over their use [30], particularly in an accelerated drug approval process [22]. Hence, the subject of biomarkers as surrogate endpoints remains both attractive and controversial. Given the important need to develop treatments for chronic and debilitating conditions such as Alzheimer’s disease and chronic obstructive pulmonary disease, the slow progression of these diseases, and the lack of satisfactory clinical endpoints [20,31], the debate over the best process for efficiently identifying a biomarker as a surrogate endpoint will certainly continue.

For the most part, biomarkers have three broad areas of application in both drug development and clinical practice: diagnosis, prognosis and intervention management. Diagnosis is concerned with determining the current state of the subject and the existence, extent and characteristics of any disease condition. From a temporal standpoint, diagnosis is focused on the present, and as such diagnostic biomarkers can be benchmarked against other concurrent observations. Prognosis involves a prediction of the probable course and/or outcome of a disease, or risk of future disease in an otherwise healthy individual. Both of these applications invoke elements of both time and chance, i.e., probability. The multifactorial nature of causality for most diseases makes their development from any given point in time a stochastic process [32], clouding the relationship between any given observation or factor and a diagnosis of disease at a later point in time. While prognostic biomarkers are vitally important, the elements of time and probability make their qualification and application problematic. Indeed, considerable debate and research are ongoing over the methodological and statistical approaches for qualifying predictive/prognostic biomarkers [33–37], highlighting the challenges in their application. The third application of biomarkers, intervention management, may actually be an extension of the use of diagnostic and prognostic markers, but is worth considering as distinct, as the characteristics of intervention are driven by prior diagnostic and/or prognostic tests.

Diagnostic Applications

Single and Multiplex Biomarkers

As noted, diagnosis is the determination of an existing state or its characteristics. Such ‘states’ could include pathobiology, disease, toxicity or adverse reaction, or exposure to an environmental agent. In clinical practice, diagnosis is usually approached in response to symptoms that a patient presents, such as fatigue or unexplained weight loss. In some cases, a single biomarker may suffice to enable the diagnosis. Thus, a blood glucose level of 200 mg/dL or higher, plus the presence of the previously mentioned symptoms, is strongly diagnostic for diabetes [38]. Similarly, detection of the exotoxin produced by toxigenic strains of Corynebacterium using an enzyme immunoassay serves as a rapid diagnosis for serious diphtheria infections [39]. In the case of heavy metals such as cadmium, exposure can be diagnosed by direct measurement of the element in urine, blood or tissue [40]. More commonly, a patient’s symptoms or condition can be the result of many different etiologies; proper understanding and treatment requires ‘differential diagnosis’ with the use of multiple biomarkers designed to rule out one or more of these etiologies...
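
As a minimal sketch of the single-biomarker case described above, the glucose example can be written as a simple threshold check. The function name, the constant names and the choice to require at least one of the two named symptoms are assumptions made for illustration; only the 200 mg/dL cutoff and the symptoms themselves come from the text. It is not intended as a clinical tool.

```python
# Illustrative sketch only: names and structure are assumptions, not from the source.
GLUCOSE_CUTOFF_MG_DL = 200.0  # threshold quoted in the text
SYMPTOMS_OF_INTEREST = {"fatigue", "unexplained weight loss"}  # symptoms named in the text


def glucose_rule_met(glucose_mg_dl, symptoms):
    """Return True when blood glucose is at or above the cutoff AND at least
    one of the named symptoms is present (the 'at least one' reading is an
    assumption; the text does not say whether all are required)."""
    has_symptom = bool(SYMPTOMS_OF_INTEREST & set(symptoms))
    return glucose_mg_dl >= GLUCOSE_CUTOFF_MG_DL and has_symptom
```

For example, `glucose_rule_met(210.0, {"fatigue"})` satisfies the rule, whereas the same glucose value with no reported symptoms does not. That mirrors the point that the biomarker is interpreted alongside the presenting symptoms, not in isolation.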

Table of contents

- Cover image

- Title page

- Table of Contents

- Copyright

- Contributors

- Preface

- Section 1: Introduction

- Section 2: Biomarker Development and Qualification in the Pharmaceutical Industry

- Section 3: Toxicogenomic Biomarkers

- Section 4: Biomarkers of Drug Safety

- Section 5: Consortia

- Section 6: Path to Regulatory Qualification Process Development

- Index

The Path from Biomarker Discovery to Regulatory Qualification, by Federico Goodsaid and William B. Mattes, is available in PDF and/or ePUB format, listed under Medicine & Pharmacology.