![]()

PART A

Basic/Standard GMDH

![]()

Chapter 1

INTRODUCTION

Godfrey C. Onwubolu

Group Method of Data Handling (GMDH)[1] is a family of algorithms for computer-based mathematical modeling and structural identification. Most of GMDH algorithms are characterized by inductive self-organizing procedure used for obtaining multi-parametric model. Specific behavior characteristics of GMDH enabled its successful use in such fields as data mining, knowledge discovery, forecasting, complex systems modeling, optimization and pattern recognition.

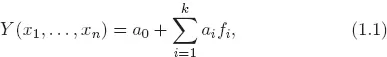

It is supposed that an object investigated with GMDH is represented by multiple inputs and at least one output. Also it is supposed that the object can be modeled by a certain subset of components of the base function (1.1):

where x are inputs, Y is output, a are coefficients, f are elementary functions dependent on different sets of inputs, k is the number of base function components.

GMDH algorithm has to consider some partial models — component subsets of the base function (1.1) and choose an optimal model structure that is indicated by the minimum value of an external criterion. The main advantage derived from such a procedure is that the identified model has an optimal complexity adequate to the level of noise in the input data (noise resistant modeling).

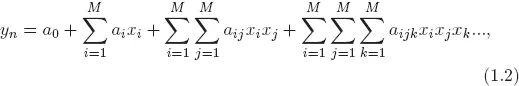

The relationship between the inputs and the output of a multiple inputs single output self-organizing network can be represented by an infinite Volterra–Kolmogorov–Gabor (VKG) polynomial of the form [1]:

where X = (x1, x2, . . . , xM) is the vector of input variables and A = (a0, ai, aij, aijk, . . .) is the vector of coefficients or weights.

This is the discrete-time analogue of a continuous time Volterra series and can be used to approximate any stationary random sequence of physical measurements. Ivakhnenko showed that the VKG series can be expressed as a cascade of second-order polynomials using only pairs of variables [1, 2]. The corresponding network can be constructed from simple polynomial and delay elements. As the learning procedure evolves, branches that do not contribute significantly to the specific output can be pruned, thereby allowing only the dominant causal relationship to evolve. The multilayer GMDH network algorithm constructs hierarchical cascades of bivariate activation polynomials in the nodes, and variables in the leaves. The activation polynomial outcomes are fed forward to their parent nodes, where partial polynomial models are made. Thus, the algorithm produces high-order multivariate polynomials by composing simple and tractable activation polynomial allocated in the hidden nodes of the network.

In neural network idiom, the higher-order polynomial networks grown by the GMDH algorithm are essentially feed-forward, multilayered neural networks. The nodes are hidden units, the leaves are inputs, and the activation polynomial coefficients are weights. The weights arriving at a particular hidden node are estimated by ordinary least squares (OLS) fitting.

The very first consideration order used in GMDH and originally called multilayered inductive procedure is the most popular one. Multilayered procedure is equivalent to the Artificial Neural Network with polynomial activation function of neurons. Therefore the algorithm with such an approach is usually referred to as GMDH-type Neural Network or Polynomial Neural Network.

GMDH is a multilayered network with a certain structure determined through training. It has the feature that the nonlinear dynamics are expressed as a mathematical model as well as the polynomial can have higher order terms without instability problems [3]. Furthermore, GMDH is used to detect input–output relationships for various models. It aims to find relationships between one output and a frequently large set of possible inputs. The network decides which of the possible inputs are actually relevant to the system being identified. Therefore, the network is built up layer by layer during training. In each layer, there are neurons with only two inputs; the output of each neuron is a quadratic function of its both inputs. The parameters of the quadratic functions are obtained using linear regression analysis. Before adding a new layer, the previous layer is trained. During this training, for each unique combination of two inputs, a neuron is trained and on the basis of a certain selection criteria, only the best performing neurons are selected. Then, a new layer is added, and the whole procedure of training is performed again on this new layer. Adding new layers is done if some stopping criteria are achieved [4].

Based on this basic algorithm, many developed studies have been done to apply the GMDH approach in various applications such as data mining, forecasting, prediction and system identification, pattern recognition, and fault detection and isolation (FDI). For example, in the system identification filed, Kondo [5] applied GMDH algorithm to the medical image recognition problem. In his study, the shapes of the livers, obtained using stomach X-ray CT image, were recognized automatically and the interests regions were extracted using GMDH algorithm. The utilized image features were the statistics of the image density such as mean, standard d...