
Chapter 1

Introduction

Human faces are among the most important subjects of image processing, computer vision and graphics. Facial visual signal processing has long been a leading research topic in the computer vision community. Research on facial signal analysis and synthesis spans a broad range of modalities and methodologies, including static frames and dynamic sequences of color images, depth images and LIDAR data. The applications of facial visual signal processing are also widespread, including image enhancement, compression, recognition and animation. Recently, with breakthroughs in computing infrastructure and social computing platforms, facial visual signal processing techniques have gained tremendous opportunities to contribute to virtual reality, social networking and remote sensing applications. Combining facial processing techniques from graphics, such as face animation, with those from vision, such as expression recognition, creates unprecedented opportunities for remote and virtual interactive services.

Among the newest and most enthusiastic adopters of facial processing techniques, modern remote learning platforms are bringing revolutionary changes to the world of education, especially in the form of Massive Open Online Courses (MOOCs). Interactive features of distance learning systems, such as lecture delivery and student monitoring, hold great potential for providing rich, effective and efficient education services that surpass both traditional classroom setups and early online learning systems. These features rely heavily on facial signal analysis and synthesis, and this connection is at the center of the topics discussed in this book.

1.1 Motivation

The interactions between the participants in a remote learning setup run in two directions. In the presentation direction, the lecture and related content are transferred from the instructor’s side to the students’ side. In the feedback direction, the behavior and responses of the audience are estimated at the students’ side and conveyed back to the presenter.

In current remote education platforms, most effort is put into the presentation direction, through video streaming and content transfer systems. With advances in video compression and streaming techniques, webinar and teleconferencing-style systems can deliver real-life video to remote audiences. However, those systems require high-end network connections and do not support interactive content. Modern distance learning platforms need robust, efficient and interactive methods for delivering video, audio and other content to learners with diverse types of devices and networking infrastructure.

In the feedback direction, students’ participation has traditionally been monitored and responded to directly by the instructor. This method is inefficient in large classrooms and impossible in remote online lectures. Modern online distance learning systems therefore call for effective feedback mechanisms that observe and understand the participation of remote learners.

1.2 Overview

This book aims to provide a comprehensive and unified view of facial image processing. It addresses a collection of state-of-the-art techniques covering the important areas of facial biometrics and behavior analysis. At the same time, these techniques converge to serve practical applications in interactive distance learning.

We introduce lecture delivery modules that support seamless 3D reconstruction and streaming of the instructor’s facial motion to students through a photorealistic avatar. The facial structure, texture and motion are captured by a real-time tracking algorithm. They are then encoded into a very low bit rate signal and efficiently transmitted to a remote site, where the full facial avatar is reconstructed and displayed.
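To give a feel for why sending tracked facial parameters instead of video yields a very low bit rate, the sketch below uniformly quantizes a vector of per-frame facial parameters and computes the resulting raw bit rate. It is an illustration under stated assumptions, not the coding scheme described later in the book: the parameter count, value range, bit depth and frame rate, as well as the helper names `quantize`, `dequantize` and `bitrate_bps`, are all hypothetical.

```python
from typing import List


def _step(lo: float, hi: float, bits: int) -> float:
    # Width of one quantization cell when [lo, hi] is split into 2**bits levels.
    return (hi - lo) / ((1 << bits) - 1)


def quantize(params: List[float], lo: float, hi: float, bits: int) -> List[int]:
    """Map each parameter (clipped to [lo, hi]) to an integer code in [0, 2**bits - 1]."""
    step = _step(lo, hi, bits)
    return [round((min(max(p, lo), hi) - lo) / step) for p in params]


def dequantize(codes: List[int], lo: float, hi: float, bits: int) -> List[float]:
    """Reconstruct approximate parameter values at the receiving side."""
    step = _step(lo, hi, bits)
    return [lo + c * step for c in codes]


def bitrate_bps(num_params: int, bits: int, fps: float) -> float:
    """Raw bits per second for num_params fixed-length codes per frame (no entropy coding)."""
    return num_params * bits * fps


if __name__ == "__main__":
    # A few hypothetical parameter values for one frame, assumed to lie in [-1, 1].
    frame = [0.25, -0.6, 0.9]
    codes = quantize(frame, -1.0, 1.0, 8)
    print(codes, dequantize(codes, -1.0, 1.0, 8))
    # Hypothetical stream: 50 parameters per frame, 8 bits each, 30 frames/s.
    print(bitrate_bps(50, 8, 30) / 1000, "kbit/s")  # 12.0 kbit/s
```

Under these assumed settings, even this naive fixed-length scheme needs only about 12 kbit/s; a practical codec would typically shrink it further, for example by entropy coding the codes or exploiting temporal redundancy between frames.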

At the students’ side, a vision system is designed to observe and understand the participation of the users. The student site setup consists of a screen showing the video cast from the instructor and a generic low-cost camera that captures the dynamics of the student’s appearance. These visual data are then analyzed by local and distributed vision algorithms to predict facial expressions and measure the engagement level of the user.

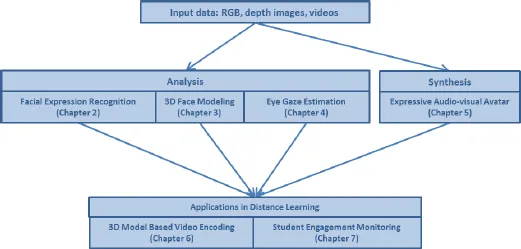

The overview of the book is illustrated in Figure 1.1. In the first half of the book, Chapters 2 to 5, we introduce the underlying facial processing techniques, including expression recognition, 3D modeling and eye gaze estimation. In the latter half, Chapters 6 and 7, we address the applications of these techniques in distance learning setups, namely model-based video coding and student engagement monitoring.

Fig. 1.1: Face processing and applications to distance learning.


Chapter 2

Facial Expression Recognition

2.1 Introduction

Automated facial expression recognition is beginning to have a sizeable impact in areas ranging from psychology to HCI (human–computer interaction) to HRI (human–robot interaction). For instance, there is an ever-increasing demand for computers and robots to behave more socially. Examples of works that employ expression recognition in HCI and HRI are [Corive et al. (2001)] and [Kim et al. (2009)]. Another application of facial expression recognition is computer-aided automated learning [Zeng et al. (2009)]. Here, the computer should ideally be able to identify the cognitive state of the student and then act accordingly; for example, if the student is not in high spirits, it may tell a joke. Expression recognition can also be very useful for online tutoring, where the instructor can receive the affective responses of the students as aggregate results in real time.
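The real-time aggregation mentioned above can be sketched as follows, assuming that some per-student expression classifier (not shown) periodically reports a timestamped label for each student. The function name `aggregate_class_affect`, the label strings and the 5-second staleness window are hypothetical choices made for illustration only.

```python
from collections import Counter
from typing import Dict, Tuple


def aggregate_class_affect(
    latest: Dict[str, Tuple[float, str]], now: float, window_s: float = 5.0
) -> Dict[str, float]:
    """Return the fraction of students currently showing each expression label.

    latest maps a student id to (timestamp in seconds, most recent predicted label).
    Predictions older than window_s seconds are treated as stale and ignored.
    """
    fresh = [label for t, label in latest.values() if now - t <= window_s]
    if not fresh:
        return {}
    counts = Counter(fresh)
    return {label: n / len(fresh) for label, n in sorted(counts.items())}


if __name__ == "__main__":
    reports = {
        "s1": (10.0, "happiness"),
        "s2": (9.0, "happiness"),
        "s3": (8.0, "surprise"),
        "s4": (1.0, "sadness"),  # stale: reported 9 s before `now`
    }
    print(aggregate_class_affect(reports, now=10.0))
```

Reporting class-level fractions rather than individual labels gives the instructor a compact summary, and the staleness window keeps students whose camera feed has dropped out from skewing the result.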

The growing number of applications of expression recognition has invited a great deal of research in this area over the past decade. Psychologists and linguists hold various opinions about the importance of different cues in human affect judgment [Zeng et al. (2009)]. However, some studies (e.g. [Ambady and Rosenthal (1992)]) indicate that facial expressions in the visual channel are the most effective and important cues, and that they correlate well with body and voice cues. This motivates a focus on various face representations for facial expression recognition.

In the following, we review some of the existing literature on facial expression recognition.

2.2 Related Work

Most existing approaches perform recognition on discrete expression categories that are universal and recognizable across different cultures. These generally include the facial expressions related to the six basic universal emotions: Anger, Fear, Disgust, Sadness, Happiness and Surprise [Zheng et al. (2012)]. The notion of universal emotions, each with a particular prototype expression, can be traced back to Ekman and his colleagues [Ekman et al. (1987)]. The availability of the corresponding facial expression databases has also made these categories popular with the computer vision community. A number of other works focus on capturing facial behavior by detecting facial action units (AUs) as defined in the Facial Action Coding System (FACS) [Ekman (1993)]. The expression categories can then be inferred from these AUs [Littlewort et al. (2011)].
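As a rough illustration of inferring expression categories from detected AUs, the sketch below matches the set of active AUs against one commonly cited set of EMFACS-style prototype combinations, with intensity letters (such as the "B" in 5B) dropped. Published prototype tables vary, and this simple rule-based matcher is an assumption for illustration; it is not necessarily the approach of [Littlewort et al. (2011)]. The names `PROTOTYPES` and `infer_expression` and the `min_score` threshold are hypothetical.

```python
from typing import Dict, FrozenSet, Iterable, Optional, Tuple

# One commonly cited set of EMFACS-style prototypes (AU numbers only; intensity letters dropped).
PROTOTYPES: Dict[str, FrozenSet[int]] = {
    "happiness": frozenset({6, 12}),
    "sadness": frozenset({1, 4, 15}),
    "surprise": frozenset({1, 2, 5, 26}),
    "fear": frozenset({1, 2, 4, 5, 7, 20, 26}),
    "anger": frozenset({4, 5, 7, 23}),
    "disgust": frozenset({9, 15, 16}),
}


def infer_expression(active_aus: Iterable[int], min_score: float = 0.5) -> str:
    """Pick the prototype whose AUs are best covered by the detected AUs.

    Score = fraction of a prototype's AUs that are active. Prototypes below
    min_score are ignored; ties are broken in favor of the larger prototype.
    Returns "neutral" when no prototype reaches min_score.
    """
    active = set(active_aus)
    best_label = "neutral"
    best_key: Optional[Tuple[float, int]] = None
    for label, proto in PROTOTYPES.items():
        coverage = len(proto & active) / len(proto)
        if coverage < min_score:
            continue
        key = (coverage, len(proto))
        if best_key is None or key > best_key:
            best_label, best_key = label, key
    return best_label


if __name__ == "__main__":
    print(infer_expression({6, 12, 25}))  # happiness
    print(infer_expression({1, 2, 4, 5, 7, 20, 26}))  # fear
```

Breaking coverage ties in favor of the larger prototype matters here: the surprise AUs form a subset of the fear AUs, so a face showing the full fear combination would otherwise be labeled as surprise.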

The features employed for automated facial expression recognition are classified by Zeng et al. [Zeng et al. (2009)] into two main categories: geometric features and appearance features. In this section, we closely follow that taxonomy to review some of the notable works on the topic [Tariq et al. (2012a)].

The geometric features are extracted from the shapes or salient point locations of important facial components such as the mouth and eyes. In the work of Chang et al. [Chang et al. (2006)], 58 landmark points are used to construct an active shape model (ASM); these points are then tracked to perform facial expression recognition. Pantic and Bartlett [Pantic and Bartlett (2007)] introduced a set of more refined features. They utilize facial characteristic points around the mouth, eyes, eyebrows, nose, and chin as geometric features for expre...